The Evolution of the Blockchain Consensus Protocol

Published September 24, 2019

Abstract

The case for adopting distributed ledger (blockchain) solutions is gaining ground in enterprises. In fact, 83% of enterprises now see compelling use cases for the technology. But not all distributed ledgers are equal. One of the biggest differences lies in how consensus is achieved. We care about consensus because it is how everyone involved in the ledger decides that a transaction is verified and can be securely written to the immutable ledger. Bitcoin, the earliest use case for blockchain, uses a proof-of-work consensus mechanism in which all the nodes have the same privilege. The idea was to create a network of equals with no centralized server or entity and no hierarchy.

While it is a great architecture for assuring that the four desired properties of blockchain technology (decentralization, security, transparency, immutability) are enabled, heavy experimentation and use across different cases since 2009 have uncovered downsides of the Bitcoin model.

These downsides, which are detailed later in this report, are primarily a result of the consensus model used. This has led to the development of different models for achieving consensus, which we will explain in this report. We’ll provide a technical level set as to what consensus is all about and how it applies to Blockchain and other distributed ledgers. We’ll then review several newer approaches to solve for consensus and data consistency across distributed systems. We’ll look at the strengths and weaknesses of these approaches and provide advice for large enterprises applying these consensus models in various scenarios.

Authors:

Gary Zimmerman, CMO and Principal Consulting Analyst

Executive Summary

The Blockchain is an undeniably ingenious invention – the brainchild of a person or group of people known by the pseudonym Satoshi Nakamoto. It was originally defined as a distributed ledger supporting the peer-to-peer electronic cash system (Bitcoin), but it has since evolved into something greater: an architecture for the exchange of any kind of value through the Internet.

The peer-to-peer networks that support blockchains and other distributed ledgers are structured according to the consensus pattern they use. In some networks, the pattern is architected so that all the nodes have the same privilege. The idea is to create a flattened network of equals. In other networks, nodes take on different roles as the work of maintaining the database is divided up among them, and some nodes are granted more privilege and authority than others, forming more of a hierarchy. Regardless of the role they play in the network, the nodes do four things governed by the consensus model:

- They each receive, order, and validate transactions for their next candidate block.

- They compete to add their candidate block to the blockchain when it is completed.

- They validate the winner’s block and add it to their own full copy of the blockchain “database of record”.

- They communicate states and events with each other in order to maintain data consistency across the network.

In doing so, a blockchain network delivers “trust” as defined by the four desired properties of blockchain technology – decentralization, security, transparency, and immutability.

As you can see, consensus models are primarily concerned with reaching agreement on the ordering of events/transactions (and on who gets to add them). Transactions are validated first by the originating node (for some networks, a miner) before they are added to the block, and then once more by the rest of the blockchain validators when a block winner is picked. The nodes add the block, and the validators verify that the block is valid. If consensus is reached, the network moves on to the next block.

While blockchains are garnering a lot of attention at the moment, they are not yet a universal replacement for centralized databases nor central administrating bodies for these reasons:

- Volatility: The structures, protocols, transactions, and lexicon in various ledgers are all non-standard and therefore non-portable.

- Regulation: The uncertain landscape with grey areas and unstated governmental positions makes it hard for businesses to ultimately build compliant and trusted solutions based on the technology.

- Scalability: There’s significant uncertainty regarding the ability of blockchain technologies to reach a global efficiency to compete with established centralized solutions.

- Experience: Finally, designing new systems using distributed ledgers is a struggle for most enterprises, and even for those that are experienced, integrating blockchain services within established processes is challenging.

Of these reasons, scalability is the one most frequently mentioned as blocking progress. The ability of a blockchain to scale (or its lack thereof) is ultimately the result of the consensus model used. The constraints on scalability can be defined in five dimensions.

- Transaction cost – How much does it cost to validate and record a transaction? This is a direct result of the incentives of the network and the ability to jump priority in the queue.

- Network throughput – What is the volume of transactions that can be processed by the network? How long does it take to completely validate and record a transaction?

- Database scalability – Are there limits caused by node costs, node performance (i.e. weakest link defines overall network performance), how many nodes can participate at what level, or database structure/storage constraints?

- Transaction finality – How long does it take to reach final data consistency across the network? What level of consistency is acceptable?

- Integrity risk – What conditions cause the database to be no longer trusted as a database of record?

Each of the consensus models has differing performance and constraints. This is why we provide details on various consensus models in this report. New or hybrid models are springing up all over to tackle one or more of the above constraints. Unfortunately, most of these are unproven outside the whitepaper first describing them or the initial offering being used to fund further development.

We recommend, for now, that you focus your efforts on projects that solve specific problems rather than trying to predict the ultimate blockchain platform of the future. Blockchain is still three to five years away from feasibility at scale, primarily because of the difficulty of resolving the alternatives in the market and the establishment of common standards.

Beyond that standardization hurdle, the development of “purpose built” utility ledgers can complicate the situation. For example, a use case that wants to combine decentralized identity (Sovrin), a major cryptocurrency (Bitcoin), and smart contracts (Ethereum) may have to interact with three separate consensus models in order to accomplish its goals. For now, try to simplify your project in order to minimize the need to manage these different architectures. If that can’t be done, then look to isolate the interactions via techniques like APIs or microservices in order to reduce the impact of independent blockchain system evolution.

Finally, data consistency in an adversarial environment is something that developers of decentralized systems commonly face but may not necessarily understand. The specific consensus model used is at the heart of any decentralized system. It decides whether to commit a transaction to a distributed database, chooses a node as a leader that organizes the updates, recognizes and isolates bad behavior, and synchronizes state machine replicas and ensures consistency among them.

Bitcoin blockchain consensus is by far the most used and established consensus mechanism, but its known limitations have spawned many different alternatives, each trying to solve for specific issues encountered. This report sheds light on those alternatives so that, as you investigate solutions, you can be aware of the tradeoffs of the different consensus patterns that underlie the blockchains/DLTs available in the market.

Why consider a decentralized system?

Before looking at the specifics of establishing consensus, we’ll look at why an enterprise should consider using a decentralized system leveraging Blockchain or another form of distributed ledger. Blockchain’s core advantages are decentralization, cryptographic security, transparency, and immutability. It allows information to be verified and value to be exchanged without incurring the restrictions, costs, and delays of relying on third-party authorities or intermediaries. Rather than there being a singular form of blockchain, the technology can be configured in multiple ways to meet the objectives and commercial requirements of a particular use case. Blockchain might have the disruptive potential to be the basis of new operating models, but its initial impact will be to drive operational efficiencies. Cost can be taken out of existing processes by removing intermediaries or the administrative effort of record keeping and transaction reconciliation.

In addition to the intuitive business fit, there are three technical arguments in favor of decentralization and distributed ledger technology:

- Fault Tolerance: Because they rely on many separate components, decentralized systems are less likely to fail accidentally.

- Attack resistance: Due to the presence of a lot of players, decentralized systems lack central points of failure; there’s no one point of attack that would disarm the entire system. This makes it more expensive and less viable to destroy these systems through conventional Sybil or Denial of Service attacks.

- Collusion resistance: In contrast to centralized systems, it is highly unlikely for participants in a decentralized system to be able to collude for selfish goals. This means it is hard for an individual or company to push forward its own agenda.

Each of these properties scales with the level of decentralization in the network. The more decentralized the network, the more fault tolerant, attack resistant, and collusion resistant it becomes.

Decentralization concerns

However, decentralization, if not done properly, raises specific concerns that need to be assessed as you develop your enterprise architecture and strategy. The primary concerns are assuring data consistency and managing these systems and services in an adversarial environment.

Data consistency

Data consistency is a measure of the uniformity of data as it moves across a network and between various applications and services. This uniformity maintains the accuracy and integrity of information stored on computers or across a network and ensures that the data does not violate application or network rules for valid data. Achieving consistency is more difficult in a decentralized environment. A good example of how the industry addresses data consistency can be found in the Domain Name System (DNS). To keep the connections between domain names and IP addresses consistent across the Web, rules for directory propagation, redirection, and timing between cache refreshes (time to live) have been developed and standardized.

Adversarial Environment

Even when data consistency is addressed, not all of the problems of a decentralized system are solved. Any network or system that is not closed to external entities is an adversarial environment. An adversarial environment is characterized by the presence of malicious actors within a system or network, who undermine the system by using it in ways for which it was not intended. The prototypical adversary in a DLT system is an entity that attempts to exploit the consensus rules to transfer assets without authorization, censor others’ transactions, or otherwise disrupt the network. Adversaries may operate inside or outside the system. Bitcoin describes its solution to this as a “trustless” system because the system was designed so that nobody has to trust anybody else in order for the system to function. Other solutions apply governance over system admission to assure some level of trust, but still validate transactions in a “trust but verify” manner to address the adversarial environment.

To solve for data consistency and to counter the adversarial environment of decentralized systems, DLT networks rely on a number of entities to agree on the validity of a transaction or operation by achieving a consensus. Depending on the environment and the rules for achieving consensus, there are certain conditions that must be met. We’ll now look at what consensus is and how it supports distributed ledger transactions.

Consensus

The consensus problem is a fundamental problem in the control of decentralized systems. How does every network participant (node) know it can trust the data? Blockchains rely on consensus models to assure trust.

The peer-to-peer networks that support blockchains and other distributed ledgers are structured according to the consensus model they use. In some networks, the model is architected so that all the nodes have the same privilege. The idea is to create a flattened network of equals. In other networks, nodes take on different roles as the work of maintaining the database is divided up among them, and some nodes are granted more privilege and authority than others, forming more of a hierarchy. Regardless of the role they play in the network, the nodes do four things governed by the consensus model:

- They each receive, order, and validate transactions for their next candidate block.

- They compete to add their candidate block to the blockchain when it is completed.

- They validate the winner’s block and add it to their own full copy of the blockchain “database of record”.

- They communicate states and events with each other in order to maintain data consistency across the network.

In doing so, a blockchain network delivers “trust” as defined by the four desired properties of blockchain technology – decentralization, security, transparency, and immutability.

As you can see, consensus models are primarily concerned with reaching agreement on the ordering of events/transactions (and on who gets to add them). Transactions are validated first by the originating node (for some networks, a miner) before they are added to the block, and then once more by the rest of the blockchain validators when a block winner is picked. The nodes add the block, and the validators verify that the block is also valid. If consensus is reached, the network moves on to the next block.

Strong Consistency

Traditional Relational Database Management Systems, even in a distributed deployment, rely on Strong Consistency. This means that data is passed on to all the replicas as soon as a write request arrives at one of the replicas of the database. While the replicas are being updated with the new data, responses to any subsequent read/write requests are delayed as all replicas work to keep each other consistent. Strong Consistency offers up-to-date data, but at the cost of high latency.

DLTs are built upon a different consistency model, that of eventual consistency. While eventual consistency offers low latency, the system may reply to read requests with stale data, since not all nodes of the database system may have the updated data at the time of the read request. These are called transient inconsistencies and were, historically, an understood risk in many hierarchical identity services.

Eventual Consistency

As data is added to a system that is eventually consistent, the system’s state is gradually replicated across all nodes. For example, in Hadoop, when a file is written to HDFS, the replicas of the data blocks are created on different data nodes after the original data blocks have been written. For the short period before the blocks are replicated, the state of the file system is inconsistent.
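The gap between a write and its replication can be illustrated with a toy in-memory store. This is a minimal sketch, not how HDFS or any DLT is implemented; the class name `EventuallyConsistentStore` and its methods are hypothetical and exist only for this illustration.

```python
class EventuallyConsistentStore:
    """Toy key-value store: a write lands on one replica and propagates later."""

    def __init__(self, replica_count: int):
        self.replicas = [{} for _ in range(replica_count)]
        self.pending = []  # (replica_index, key, value) updates not yet applied

    def write(self, key: str, value: str) -> None:
        # The write is acknowledged as soon as the first replica has it.
        self.replicas[0][key] = value
        for index in range(1, len(self.replicas)):
            self.pending.append((index, key, value))

    def read(self, replica_index: int, key: str):
        # A read is served by whichever replica is asked, stale or not.
        return self.replicas[replica_index].get(key)

    def replicate(self) -> None:
        """Apply all queued updates so that every replica catches up."""
        for index, key, value in self.pending:
            self.replicas[index][key] = value
        self.pending.clear()


store = EventuallyConsistentStore(replica_count=3)
store.write("balance", "100")
stale = store.read(2, "balance")   # replica 2 has not seen the write yet
store.replicate()
fresh = store.read(2, "balance")   # all replicas now agree
```

Here `stale` is empty while `fresh` holds the written value, which is the transient inconsistency described above.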

In order for any transaction to be confirmed within a DLT, it has to be approved by several nodes. Basically, this is the process of reaching consensus on activities conducted on a DLT platform. While the eventual consistency delivered through consensus is taken as “good enough”, it is still probabilistic in that no consensus mechanism can provide complete safety and liveness[1]. Different consensus structures use different means to increase the confidence that a transaction is valid and final, but in the end, 100% finality and consistency cannot be guaranteed.
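To make “probabilistic” concrete for one case, the sketch below ports the attacker-success calculation published in the Bitcoin whitepaper to Python. It estimates the chance that an attacker controlling a fraction `q` of the hashing power eventually overtakes the honest chain after `z` confirmations. The function name and the sample value of `q` are our own choices for illustration, and the model applies to proof-of-work chains only.

```python
import math


def attacker_success(q: float, z: int) -> float:
    """Probability that an attacker with hash share q catches up from z blocks behind."""
    p = 1.0 - q                      # honest share of the hashing power
    lam = z * (q / p)                # expected attacker progress (Poisson mean)
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1 - (q / p) ** (z - k))
    return total


# Assumed attacker share of 10%; confidence grows with each confirmation.
risk_by_confirmations = {z: attacker_success(0.10, z) for z in range(0, 7)}
```

The risk shrinks quickly as confirmations accumulate, but it never reaches exactly zero, which is why finality in such systems is described as probabilistic.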

Consensus algorithms and protocols

Now that you have a general understanding of consensus, let’s define two further concepts related to consensus — the protocol and algorithms. These two concepts will help you understand how consensus is achieved, and the component parts of any DLT implementation.

Consensus protocols

A protocol is a set of rules that govern how a system operates. The rules establish the basic functioning of the different parts, how they interact with each other, and what conditions are necessary for a robust implementation.

The different parts of a protocol are not sensitive to order or chronology — it doesn’t matter which part goes first. A protocol also doesn’t tell the system how to produce a result. It doesn’t have an objective and doesn’t produce an output.

In DLTs, the protocol:

- tells the nodes how to interact with each other (without telling them to do so)

- determines how data gets routed from one node to the next (without telling the data to move)

- defines what the blocks have to look like

- stipulates who decides which transactions are valid

- establishes how consensus is determined (without dictating the procedure)

- identifies who maintains the ledger

- delegates who determines how the rules of the system change

- decides if identities are needed

- determines who can create new coins/tokens (but not how)

- triggers procedures in case of error

Bitcoin, Ethereum, and Sovrin’s Plenum are protocols, not algorithms.

Consensus algorithms

A consensus algorithm is a process used to achieve agreement on a single data value among distributed processes or systems. Consensus algorithms are designed to achieve reliability in a network involving multiple unreliable nodes (aka an adversarial environment). Solving that issue — known as the consensus problem — is important in distributed computing and multi-agent systems.

Consensus algorithms relate to the rules (mathematics) that each node follows to achieve consensus. These algorithms describe the steps that need to take place. For example, a proof of work (PoW) algorithm might define the rules such that the creator of the next block is chosen via its ability to solve a complex mathematical puzzle, while a proof of stake (PoS) algorithm might define the rules such that the creator of the next block is chosen via various combinations of random selection, stake, or age.

Unlike a consensus protocol, which is a set of rules that determine how the system achieves consensus, an algorithm is a set of instructions that produce an output or a result. It can be a simple script or a complicated program. The order of the instructions is essential, and the algorithm specifies what that order is. It tells the system what to do to achieve the desired result.

Because some nodes will be unreliable, consensus algorithms necessarily assume that some processes and systems will be unavailable and that some communications will be lost. As a result, consensus algorithms must be fault-tolerant. They typically assume that only a portion of nodes will respond, but require a minimum level of agreement from that portion; for the Bitcoin blockchain, for example, that threshold is a majority (51%) of the mining power.

The algorithm:

- verifies signatures

- confirms balances or asset status

- decides if a block is valid

- determines how miners validate a block

- establishes the procedure for telling a block to move

- establishes the procedure for creating new coins / tokens

- tells the system how to determine consensus

PoW, PoS, and RBFT are algorithms, not protocols.

Applications of consensus algorithms include:

- Validation – Deciding whether to commit a transaction to a distributed database.

- Selection – Designating a node as a leader for some distributed task.

- Finalization – Synchronizing state machine replicas and ensuring consistency among them.

Bitcoin sets the standard

Blockchain technology was first outlined in 1991 by Stuart Haber and W. Scott Stornetta, two researchers who wanted to implement a system where document timestamps could not be tampered with. But it wasn’t until almost two decades later, with the launch of Bitcoin in January 2009, that blockchain had its first real-world application.

The Bitcoin protocol is built on the blockchain. In announcing the research paper that introduced the digital currency, Bitcoin’s pseudonymous creator Satoshi Nakamoto referred to it as “a new electronic cash system that’s fully peer-to-peer, with no trusted third party.”

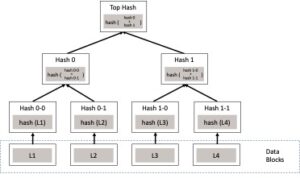

Blockchain technology was created in such a way that it is possible to reach consensus on actions conducted in the data storage system. In comparison to traditional data storage systems, blockchain provides a wider choice of ways to reach consensus. It uses data blocks and transaction mechanisms where various organizations (even mutually untrusting ones) can host different informational patterns. These parties (nodes, individuals, or organizations) constantly verify data integrity and validity via consensus algorithms to agree upon transaction execution. For this, a cryptographic audit is used which relies on the Merkle tree structure:

Figure 1 – Merkle tree
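The Merkle tree audit can be sketched in a few lines. The example below is a simplified illustration, assuming double SHA-256 as the node hash and duplication of the last hash on odd-sized levels (both conventions used by Bitcoin); it hashes arbitrary byte strings rather than real transaction IDs, and the sample transactions are made up.

```python
import hashlib


def sha256d(data: bytes) -> bytes:
    """Double SHA-256 of the input."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Fold a list of leaf payloads into a single 32-byte root hash."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [sha256d(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last hash on odd levels
        level = [sha256d(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Hypothetical transactions, for illustration only.
txs = [b"alice->bob:5", b"bob->carol:2", b"carol->dave:1"]
root = merkle_root(txs)
tampered_root = merkle_root([b"alice->bob:50", b"bob->carol:2", b"carol->dave:1"])
```

Changing any single transaction changes the root, so nodes can compare one short hash to confirm that they hold the same set of transactions.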

Eventual consistency is reached via an order-execute architecture. First, the blockchain orders transactions into blocks using a consensus protocol. Only after that can the transactions be executed sequentially, one after another.

Figure 2 – Blockchain structure

This type of approach is often the reason for performance issues in various blockchains. As transaction execution happens in a serial manner, it relies upon consensus algorithms that can be slow and computationally expensive.

Considering the above-mentioned problem of eventual consistency, DLT platforms are exploring alternative data structures and consensus mechanisms that are not as expensive to use and provide higher speed and transaction-execution throughput.

Consensus patterns

This report describes the various consensus patterns that are emerging. They are presented as patterns because every DLT implementation may have differing rules, algorithms, and governance, but can be generalized in the way they approach consensus into the patterns described below.

Consensus in public blockchains

When we discuss blockchains, what most people envision is a public open blockchain. It’s a blockchain where anybody can write data to the blockchain, and anybody else can read those data. Public blockchain platforms are what we also refer to as permissionless blockchain platforms, meaning that they really strive to, by design, increase ledger access while protecting the user’s anonymity. They are also the most reliant on code as the arbiter of truth. The protocols and algorithms are built to assure consistency when the generator of the transaction and the recorder of the block are unknown and untrusted.

Permissionless blockchains rely on economics and game theory incentives to ensure that everybody in the system behaves honestly and according to the rules. The consensus patterns set up situations of group consensus, through which honest participants are economically rewarded, and dishonest ones only incur work or cost, with no possibility of ever recouping that cost. The most robust permissionless consensus pattern is that of the Bitcoin Protocol and Proof of Work.

Proof of Work

Proof of Work (PoW) was the first consensus algorithm to create distributed trustless consensus and to solve the double-spend problem.

The “Double Spend” problem is caused by the ability of computers to endlessly duplicate information. In the case of financial value, there are three important things to record: who owns a specific value; the time at which the person owns this value; and where (in which account or wallet) the value resides. When transferring financial value from one person to another, it is essential that if Alice sends an asset to Bob, Alice should not be able to duplicate the same asset and send it again to Carol.
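The Alice, Bob, and Carol scenario can be expressed as a toy ledger that refuses to move an asset its sender no longer owns. This is a single-machine sketch with hypothetical names (`Ledger`, `transfer`, `coin-1`); it shows the rule being enforced, not how a distributed network agrees on it.

```python
class Ledger:
    """Toy ledger that tracks which asset IDs each owner currently holds."""

    def __init__(self, holdings: dict[str, set[str]]):
        self.holdings = {owner: set(assets) for owner, assets in holdings.items()}

    def transfer(self, sender: str, receiver: str, asset_id: str) -> bool:
        """Move an asset if the sender still owns it; reject the request otherwise."""
        if asset_id not in self.holdings.get(sender, set()):
            return False  # the sender no longer owns the asset: a double spend
        self.holdings[sender].remove(asset_id)
        self.holdings.setdefault(receiver, set()).add(asset_id)
        return True


ledger = Ledger({"alice": {"coin-1"}})
first = ledger.transfer("alice", "bob", "coin-1")     # accepted
second = ledger.transfer("alice", "carol", "coin-1")  # rejected
```

The hard part in a decentralized setting is getting every node to agree on which of the two transfers came first, which is the role of the consensus algorithm.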

PoW is not a new idea, but the way Satoshi combined this and other existing concepts — cryptographic signatures, Merkle trees, and P2P networks — into a viable distributed consensus system, of which cryptocurrency is the first and basic application, was quite innovative. PoW is a competitive consensus protocol rooted in game theory. Competitive consensus protocols select the node that can write a valid block to the chain by means of a contest. PoW is currently being used by Bitcoin, Ethereum, Litecoin, Dogecoin, and many others.

The way it works is that the participants of the blockchain (called miners) have to solve a complex but otherwise useless computational problem in order to add a block of transactions to the blockchain.

Basically, it is done to ensure that the miners are spending resources (computing cycles) to do the work, which ensures that they won’t harm the blockchain system, because harming the system will result in losing their investment; thus, harming themselves.
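A minimal sketch of such a puzzle follows. It assumes a single SHA-256 hash over a made-up header string and a difficulty expressed as a number of leading zero bits; real networks use their own header formats and target encodings, so treat every name and parameter here as illustrative.

```python
import hashlib


def block_hash(header: bytes, nonce: int) -> int:
    """Hash the header together with a nonce and return the digest as an integer."""
    digest = hashlib.sha256(header + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def mine(header: bytes, difficulty_bits: int) -> int:
    """Brute-force the first nonce whose hash falls below the target."""
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while block_hash(header, nonce) >= target:
        nonce += 1
    return nonce


def verify(header: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Checking a claimed solution takes a single hash."""
    return block_hash(header, nonce) < (1 << (256 - difficulty_bits))


header = b"prev-hash|merkle-root|timestamp"   # hypothetical header contents
nonce = mine(header, difficulty_bits=12)       # a few thousand attempts on average
is_valid = verify(header, nonce, difficulty_bits=12)
```

Finding the nonce takes many attempts, while `verify` needs only one hash. Each additional difficulty bit doubles the expected mining work without changing the cost of verification.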

The difficulty of the problem is adjusted at runtime to keep the block time constant. Sometimes multiple miners solve the problem at nearly the same time. When this happens, the chain temporarily splits into competing versions, which is called a fork.

Figure 3 – Illustration of a blockchain fork

When such a fork occurs, the Longest Chain Rule is applied. The Longest Chain Rule is the determining factor whenever two competing versions of the blockchain history arise on the network. The rule simply states that whichever of the two versions grows longer first, wins. The other version is overwritten, and therefore all transactions and rewards on that version are erased. The simplicity of this rule is a key to understanding why PoW consensus mechanisms continue to be adopted even though performance problems exist. PoW blockchains are safe as long as more than 50% of the work being put in by miners is honest.
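The rule as stated above can be sketched as a simple comparison. This is a simplification that counts blocks; Bitcoin clients in practice compare the cumulative proof of work of each chain, which usually but not always coincides with length. The function names and block labels are hypothetical.

```python
def resolve_fork(local: list[str], candidate: list[str]) -> list[str]:
    """Adopt the candidate chain only if it is strictly longer than the local one."""
    return candidate if len(candidate) > len(local) else local


def orphaned_blocks(old: list[str], new: list[str]) -> list[str]:
    """Blocks from the old chain that do not appear in the new one."""
    kept = set(new)
    return [block for block in old if block not in kept]


# Two versions of history that diverge after block b1.
chain_a = ["genesis", "b1", "b2a"]
chain_b = ["genesis", "b1", "b2b", "b3b"]

winner = resolve_fork(chain_a, chain_b)
dropped = orphaned_blocks(chain_a, winner)
```

A node holding `chain_a` switches to `chain_b` once it sees the longer version, and block `b2a` is dropped from its view of history. On a tie, the node keeps the chain it already has.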

How Bitcoin mining works:

- Pending transactions collect in a memory pool (mempool).

- Miners verify that the transactions they select from the mempool are legitimate, bundle them into a candidate block, and compete to solve a mathematical puzzle for that block.

- The first miner to solve the puzzle is rewarded with newly minted bitcoin (the block reward) and the network transaction fees.

- The winning candidate block is attached to the blockchain.

The type of puzzle[2] miners must solve has a few key features that define the PoW system:

- The puzzles are asymmetric, meaning they are difficult for miners to solve, but the correct answer is easily verified by the network.

- The puzzles have no skill involved; they require brute force. This ensures certain miners do not gain an unfair advantage over others. The only way for a miner to improve their odds of solving a puzzle is to acquire additional computational power; something that is very energy and capital intensive.

- The puzzle parameters are periodically updated in order to keep the block time consistent. The Bitcoin protocol, for example, has a block generation target time of 10 minutes[3]. If the average block time over two weeks has decreased to below 10 minutes, the network automatically increases the difficulty. This, in turn, increases the number of calculations and the average time required for the puzzle to be solved.
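The difficulty adjustment in the last item can be sketched as a proportional correction. The 10-minute target comes from the text; the 2,016-block adjustment period (about two weeks) and the factor-of-four clamp are Bitcoin's published parameters rather than figures from this report, and the function below is an illustration, not the reference implementation.

```python
TARGET_BLOCK_SECONDS = 10 * 60   # 10-minute block target
RETARGET_INTERVAL = 2016         # blocks per adjustment period (about two weeks)
MAX_ADJUSTMENT = 4.0             # a single adjustment is clamped to 4x either way


def retarget(difficulty: float, actual_seconds: float) -> float:
    """Scale difficulty by how far the last period deviated from its target duration."""
    expected_seconds = TARGET_BLOCK_SECONDS * RETARGET_INTERVAL
    ratio = expected_seconds / actual_seconds
    ratio = max(1 / MAX_ADJUSTMENT, min(MAX_ADJUSTMENT, ratio))
    return difficulty * ratio


# Blocks averaged 9 minutes over the period, so the difficulty rises by 10/9.
faster_period = 9 * 60 * RETARGET_INTERVAL
new_difficulty = retarget(100.0, faster_period)
```

If blocks arrive exactly on schedule, the difficulty is unchanged; if they arrive faster, it rises in proportion, and if slower, it falls.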

Pros:

- It has been tested in projects and prototypes since 2009 and is in limited production today.

- It has the highest level of adoption of any of the protocols contained in this report.

Cons:

- It’s slow. Bitcoin’s blocks contain the transactions on the bitcoin network. The on-chain transaction processing capacity of the network is limited by the average block creation time of 10 minutes and the block size limit of 1 megabyte. Together, these constrain the network’s throughput. The maximum transaction processing capacity, estimated using an average or median transaction size, is between 3.3 and 7 transactions per second. Ethereum is faster, at about 14 seconds per block, because it uses smaller blocks that vary in size.

- It uses a lot of energy. The poster child for this issue is Bitcoin. Three things drive Bitcoin’s power use: artificial scarcity leading to many, many miners participating, increasingly hard competition for the remaining few million coins, and its proof-of-work approach to immutability and validity. As shown in Table 1, the mining of Bitcoin is currently using as much electrical power as Switzerland.

| Description | Value |
| --- | --- |
| Bitcoin’s current estimated annual electricity consumption (Terawatt-hours[4]) | 62.67 |
| Bitcoin’s current minimum annual electricity consumption (Terawatt-hours) | 38.28 |
| Annualized global mining revenues | $6,340,318,047 |
| Annualized estimated global mining costs | $3,133,363,020 |
| Current cost percentage | 49.42% |
| Country closest to Bitcoin in terms of electricity consumption | Switzerland |
| Electricity consumed per transaction (Kilowatt-hours) | 465 |
| Number of U.S. households that could be powered by Bitcoin energy consumption | 5,802,524 |
| Number of U.S. households powered for 1 day by the electricity consumed for a single transaction | 15.7 |

Table 1 – Energy consumption patterns for Bitcoin – source: Digiconomist

- It’s susceptible to concentration of network power. An increasing concern with blockchain networks utilizing Proof of Work systems is the risk of centralization. As noted earlier, the role of mining in Proof of Work systems is becoming increasingly reserved for large-scale operations. Control of blockchain networks is moving from the community at large to fewer and fewer hands, contrary to the notion of a decentralized Internet of Value. As shown in Figure 4 below, the top five miners own 72.5% of the processing power. So, while it is theoretically possible for anyone to set up a node and participate, the financial incentives are skewed towards the larger players. Concentration also increases the likelihood of a 51% attack if the larger players collude to “game the system”.

Figure 4 – Concentration of mining capabilities

- It’s expensive. Transaction fees are included with your bitcoin transaction in order to have your transaction processed by a miner and confirmed by the Bitcoin network. The space available for transactions in a block is currently artificially limited to 1 MB in the Bitcoin network. This means that to get your transaction processed quickly you will have to outbid other users. Recent Bitcoin transaction fees (in dollars per transaction[5]) were:

- Next Block Fee – the fee to have your transaction mined on the next block (10 minutes to finality). $2.56

- 3 Blocks Fee – the fee to have your transaction mined within three blocks (30 minutes). $2.37

- 6 Blocks Fee – the fee to have your transaction mined within six blocks (1 hour). $0.27

These fees limit the utility of a blockchain for high volume, low value transactions such as IoT communications or smart contract execution.
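The throughput ceiling cited in the first drawback above follows from simple arithmetic on the block size and block time. The sketch below uses the 1 MB limit and 10-minute interval from the text; the two average transaction sizes (500 and 240 bytes) are assumptions chosen to bracket the quoted range, not figures from this report.

```python
BLOCK_SIZE_BYTES = 1_000_000    # 1 MB block size limit
BLOCK_INTERVAL_SECONDS = 600    # 10-minute average block time


def max_tps(avg_tx_bytes: int) -> float:
    """Upper bound on transactions per second for a given average transaction size."""
    transactions_per_block = BLOCK_SIZE_BYTES / avg_tx_bytes
    return transactions_per_block / BLOCK_INTERVAL_SECONDS


low = max_tps(500)    # larger transactions: about 3.3 per second
high = max_tps(240)   # smaller transactions: about 6.9 per second
```

Because capacity is capped this tightly, users compete for block space through fees, which is what drives the per-transaction costs listed above.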

Used by: Bitcoin, Ethereum, Litecoin, Dogecoin and hundreds of other projects.

Delayed Proof-of-Work

A variant of PoW is Delayed Proof of Work (dPoW). It is a hybrid consensus method that allows one blockchain to take advantage of the security provided through the hashing power of a secondary blockchain. This type of consensus is commonly implemented through what are known as side, directed, or child chains. In these implementations, consensus is achieved through a group of notary nodes that add data from the sidechain onto the mainchain, so that both blockchains would have to be compromised to undermine the security of the first.

The blockchain relying on dPoW can make use of either the Proof of Work (PoW) or Proof of Stake (PoS) consensus algorithms to function; and it can attach itself to any PoW blockchain desired. However, Bitcoin’s hash rate currently provides the greatest level of security to blockchains being secured by dPoW.

There are typically two types of nodes within a dPoW system: notary (or watchman) nodes and normal (or functionary[6]) nodes. The notary nodes add (notarize) confirmed blocks from the dPoW blockchain onto the attached main PoW blockchain.

The dPoW system is designed to allow the side or child blockchain to continue functioning without the notary nodes. In such a situation, the dPoW blockchain can continue operating based upon its initial consensus method; however, it would no longer have the added security of the attached blockchain.
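The notarization idea can be sketched in a few lines. The example below is a simplified illustration, assuming a notary writes the hash of a confirmed sidechain block onto the main chain and that anyone can later compare a claimed sidechain block against that anchor. The names `MainChain`, `block_hash`, and `is_consistent` are hypothetical and do not correspond to any particular dPoW implementation.

```python
import hashlib


def block_hash(height, payload):
    """Hash a (height, payload) pair; a stand-in for a real block header hash."""
    return hashlib.sha256(f"{height}:{payload}".encode()).hexdigest()


class MainChain:
    """Toy main chain that only records notarizations written by notary nodes."""

    def __init__(self):
        self.notarizations = {}  # sidechain height -> sidechain block hash

    def notarize(self, height, side_hash):
        self.notarizations[height] = side_hash


def is_consistent(main_chain, height, payload):
    """Check a claimed sidechain block against the hash anchored on the main chain."""
    anchored = main_chain.notarizations.get(height)
    return anchored is not None and anchored == block_hash(height, payload)


main = MainChain()
main.notarize(100, block_hash(100, "alice pays bob 5"))

honest = is_consistent(main, 100, "alice pays bob 5")
rewritten = is_consistent(main, 100, "alice pays mallory 5")
print(honest, rewritten)  # prints True False
```

A rewritten sidechain history no longer matches the anchored hash, so an attacker would also need to rewrite the main chain to hide the change.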

Pros:

- More energy efficient since a lot of the consensus activity happens in a smaller network outside of the main blockchain.

- Increased throughput due to the break in the critical dependence on the main chain consensus protocol for every transaction.

- Can add value to other blockchains by indirectly providing Bitcoin (or any secure chain) security without paying the cost of Bitcoin (or any secure chain) transactions

Cons:

- Systems deploying this architecture need to know more than one consensus protocol.

- Side chains may be more customized and therefore do not share the advantages of community support and adoption of a mainchain such as Bitcoin.

Used by: Liquid, Komodo, Plasma

Proof of Stake

Proof of Stake (PoS) systems have the same purpose of validating transactions and achieving consensus; however, the process is quite different from that of Proof of Work systems. With Proof of Stake, there is no mathematical puzzle. Instead, the creator of a new block is chosen pseudo-randomly, with a probability weighted by their stake. The stake is how many coins/tokens one possesses. For example, if one person were to stake 10 tokens and another person staked 50 tokens, the person staking 50 tokens would be 5 times more likely to be chosen as the next block validator.
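The 10-token versus 50-token example can be checked with a short sketch of stake-weighted selection. This is an illustration only, assuming selection probability is exactly proportional to stake; the function names and the use of Python's `random.choices` are choices made for this example, not a description of any specific PoS protocol.

```python
import random


def selection_probabilities(stakes):
    """Return each participant's chance of being chosen, proportional to stake."""
    total = sum(stakes.values())
    return {name: amount / total for name, amount in stakes.items()}


def pick_validator(stakes, rng):
    """Draw one validator at random, weighted by stake."""
    names = list(stakes)
    weights = [stakes[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


stakes = {"alice": 10, "bob": 50}
probs = selection_probabilities(stakes)
rng = random.Random(42)  # seeded only to make the sketch repeatable
winner = pick_validator(stakes, rng)
print(probs, winner)
```

Here `probs["bob"]` is five times `probs["alice"]`, matching the example in the text.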

Proof of Stake systems potentially provide a fairer solution. The amount of network control a participant can gain in a Proof of Stake system is directly proportional to how much they invest. If one participant invests ten times more than another participant, they will receive ten times the amount of control. On the contrary, under Proof of Work systems, if a miner invests 10 times more into equipment than another, they will actually receive more than 10 times the computational power. This comes as a result of bulk purchasing deals and the increased efficiency of high-end equipment. As noted earlier in this report, it is becoming increasingly less profitable and more difficult for individuals to compete against large mining farms.

A key advantage of the Proof of Stake system is higher energy efficiency. By cutting out the energy-intensive mining process, Proof of Stake systems may prove to be a much greener option compared to Proof of Work systems. Additionally, the economic incentives provided by Proof of Stake systems may do a better job of promoting network health. Under a Proof of Work system, a miner could potentially own zero of the coins they are mining, seeking only to maximize their own profits. In a Proof of Stake system, on the other hand, validators must own and support the currency they are verifying. These advantages and more will be discussed in detail below.

Another key distinction between Proof of Stake and Proof of Work is that under many Proof of Stake designs there is no new coin creation (mining). Instead, all of the coins are created in the very beginning. In those designs, the validators must be fully rewarded through transaction fees as opposed to newly minted coins.

But one issue that can arise is the “nothing-at-stake” problem, wherein block generators have nothing to lose by voting for multiple blockchain histories (forks), thereby preventing consensus from being achieved.

Figure 5 – Nothing at stake problem

Constant forking of a blockchain is not healthy for a network and leads to instability. In Proof of Work systems, if a blockchain is forked, miners have to make the decision to continue supporting the original blockchain or switch to the newer forked blockchain. In order to support both sides of the fork, a miner would have to split their computational resources between the two. In this way, Proof of Work systems naturally discourage constant forking from occurring through an economic incentive.

Proof of Stake systems do not inherently discourage forking. When a blockchain forks, a validator will receive a duplicate copy of their stake on the newly forked blockchain. If a validator signs off on both sides of the fork, they could potentially claim twice the amount of transaction fees as a reward and double spend their coins; this is known as the ‘nothing at stake’ problem. A participant is not required to increase their stake in order to validate transactions on multiple copies of a blockchain, thus, there is no economic incentive preventing this bad behavior.

A potential solution to the ‘nothing at stake’ problem is to impose a deposit that will be locked for a period of time. Ethereum plans on switching from a Proof of Work system to a Proof of Stake system sometime in 2019, with a proposed consensus protocol called Casper. Casper will utilize a deposit solution in which validators are required to submit a minimum deposit in order to participate. If the protocol determines a participant has violated a set of rules, such as signing off on multiple forks, the deposit will be confiscated.
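The deposit-and-confiscate idea can be sketched as follows. This is a minimal illustration, assuming the only rule enforced is "do not sign two different blocks at the same height"; the `DepositRegistry` class and its methods are hypothetical and are not Casper's actual rules or API.

```python
class DepositRegistry:
    """Toy registry that locks validator deposits and slashes double-signers."""

    def __init__(self, min_deposit):
        self.min_deposit = min_deposit
        self.deposits = {}  # validator -> locked deposit
        self.signed = {}    # (validator, height) -> block hash already signed

    def join(self, validator, deposit):
        if deposit < self.min_deposit:
            raise ValueError("deposit below the required minimum")
        self.deposits[validator] = deposit

    def record_signature(self, validator, height, block_hash):
        """Return True if accepted, False if the validator was slashed."""
        key = (validator, height)
        previous = self.signed.get(key)
        if previous is not None and previous != block_hash:
            # Signing two competing blocks at one height: confiscate the deposit.
            self.deposits[validator] = 0
            return False
        self.signed[key] = block_hash
        return True


registry = DepositRegistry(min_deposit=32)
registry.join("val-1", 32)
first = registry.record_signature("val-1", height=1, block_hash="fork-A")
second = registry.record_signature("val-1", height=1, block_hash="fork-B")
print(first, second, registry.deposits["val-1"])  # prints True False 0
```

Because signing both forks now costs the whole deposit, the validator has something at stake again.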

In the Proof of Stake consensus algorithm, block producers (called validators, delegates, or forgers) are chosen randomly or voted for by holders of the native coin on the network.

When you hold a given amount of coins in your wallet for staking, your computer qualifies to be a node. For a node to be chosen as one of the stakers, it needs to have deposited a certain amount of coins in a bound wallet.

The chosen validators then stake the required amount of coins using the bound wallets. The node will forge or create new blocks proportional to the number of coins in their wallets. For instance, if you have 1% of all the coins, then you can “mine” 1% of the new blocks.

Different coins use a variety of PoS systems, but they all serve the same purpose: verifying transactions and securing the network. Validators are rewarded with block rewards as well as a share of the transaction fees collected per block.

Pros:

- Energy efficiency increased by the elimination of mining.

- More expensive to attack because of the investment in tokens required to participate.

- Not susceptible to economies of scale – In PoS a dollar is still a dollar, and big pools can’t get away with having more computing power for the same amount of money invested. However, smaller networks are still exposed to a type of economies-of-scale issue, as noted below.

Cons:

- Nothing-at-stake problem requires the use of financial penalties as part of the protocol to enforce good behavior because there is no penalty built into the algorithm itself.

- Until PoS is widely adopted, the use of a centralized coordinator is required to maintain fairness in the system. Much like the “concentration of power problem” in PoW, if there are few participants, the ones with the highest stakes write the blocks and reap the rewards. This balances out as the network grows and the need for a coordinator eventually disappears.

- Just being adopted, so it is not yet “battle tested”.

Used by: Ethereum (soon), Peercoin, Nxt

Delegated Proof-of-Stake

In DPoS, the stake holders in the system can elect leaders (witnesses) who will vote on their behalf. This makes it faster than the normal PoS.

For example, in the case of EOS, 21 witnesses get elected at a time and a pool of nodes (potential witnesses) are kept at standby so that if one of the witness nodes faults or performs malicious activity, then it could be replaced by a new node immediately. The witnesses are paid a fee for producing blocks. The fee is set by the stake holders.

Usually all the witnesses produce blocks one at a time in a round-robin fashion. This prevents a witness from publishing consecutive blocks, which makes double-spending attacks harder to execute. If a witness does not produce a block in its time slot, then that time slot is skipped, and the next witness produces the next block. If a witness continually misses its blocks or publishes invalid transactions, then the stakers vote it out and replace it with a new witness.
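The round-robin schedule, skipped slots, and replacement from the standby pool can be sketched as below. This is a simplified model; `produce_round` and `vote_out` are hypothetical helper names, and a real DPoS network would tie replacement to an on-chain stakeholder vote.

```python
def produce_round(witnesses, offline=frozenset()):
    """Walk one round of time slots; a slot is skipped if its witness is offline."""
    blocks = []
    for slot, witness in enumerate(witnesses):
        if witness in offline:
            continue  # slot is skipped; the next witness produces the next block
        blocks.append((slot, witness))
    return blocks


def vote_out(witnesses, standby, faulty):
    """Replace a faulty witness with the first node from the standby pool."""
    replacement = standby[0]
    updated = [replacement if w == faulty else w for w in witnesses]
    return updated, standby[1:]


witnesses = ["w1", "w2", "w3"]
standby = ["s1", "s2"]

blocks = produce_round(witnesses, offline={"w2"})
print(blocks)  # prints [(0, 'w1'), (2, 'w3')]

witnesses, standby = vote_out(witnesses, standby, faulty="w2")
print(witnesses, standby)  # prints ['w1', 's1', 'w3'] ['s2']
```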

In DPoS, witnesses can collaborate to make blocks instead of competing as in PoW and PoS. By partially centralizing the creation of blocks, DPoS is able to run orders of magnitude faster than most other consensus algorithms.

Pros:

- Energy efficient.

- Faster than POW and POS. EOS has demonstrated a block commit time of 0.5 sec.

Cons:

- A bit centralized in that the validators are preselected to participate in the validate/commit cycle.

- Participants with high stakes can vote themselves as validator creating an unfair environment similar to the concentration of network power experienced in Bitcoin POW.

Used by: BitShares, Steemit, EOS, Lisk, Ark

Permissioned Consensus

What we have covered so far is consensus in public or permissionless blockchains. There is another category of systems called permissioned blockchains. A major difference between public and permissioned blockchain networks is in the area of trust. Having little or no knowledge as to the participants or verifiers in a network requires additional work to validate transactions. In a “trust-less” environment, every node has to verify every transaction and every block. Permissioned networks operate on the basis of “trust but verify” and as such, use lighter-weight consensus mechanisms as we describe below. These lighter weight mechanisms can often operate faster while still providing strong security given that the participants are known.

Consensus in permissioned systems may be implemented in different ways, for example through a lottery-based algorithm such as Proof of Elapsed Time (PoET) or through voting-based methods including Redundant Byzantine Fault Tolerance (RBFT), Practical Byzantine Fault Tolerance (PBFT), LibraBFT, and Raft.

The lottery-based algorithms are advantageous in that they can scale to a large number of nodes since the winner of the lottery proposes a block and transmits it to the rest of the network for validation. On the other hand, these algorithms may lead to forking when two “winners” propose a block. Each fork must be resolved, which results in a longer time to finality.

The voting-based algorithms are advantageous in that they provide low-latency finality. When a majority of nodes validates a transaction or block, consensus exists, and finality occurs. Because voting-based algorithms typically require nodes to transfer messages to each of the other nodes on the network, the more nodes that exist on the network, the more time it takes to reach consensus. This results in a trade-off between scalability and speed.
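The scalability trade-off can be made concrete by counting messages. The sketch below is a rough model, assuming one voting phase in which every node sends one message to every other node, compared with a single lottery winner broadcasting one block. Real protocols use several phases and optimizations, so these counts are illustrative only.

```python
def all_to_all_messages(n):
    """Messages in one voting phase where each node messages every other node."""
    return n * (n - 1)


def broadcast_messages(n):
    """Messages needed for one lottery winner to send its block to all others."""
    return n - 1


for n in (4, 16, 64):
    print(n, all_to_all_messages(n), broadcast_messages(n))
# prints:
# 4 12 3
# 16 240 15
# 64 4032 63
```

The voting cost grows roughly with the square of the network size, while the broadcast cost grows linearly.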

Proof of Elapsed Time

PoET is a lottery-based consensus algorithm that is often used on permissioned blockchain networks to decide the mining rights or the block winners on the network. Permissioned blockchain networks are those which require any prospective participant to identify themselves before they are allowed to join. Based on the principle of a fair lottery system where every single node is equally likely to be a winner, the PoET mechanism spreads the chances of winning fairly across the largest possible number of network participants.

The PoET algorithm works as follows. Each participating node in the network is required to wait for a randomly chosen time period, and the first one to complete the designated waiting time wins the new block. Each node in the blockchain network generates a random wait time and goes to sleep for that specified duration. The first node to wake up (that is, the one with the shortest wait time) commits a new block to the blockchain, broadcasting the necessary information to the whole peer network. The same process then repeats for the discovery of the next block.

The PoET network consensus mechanism needs to ensure two important factors: first, that the participating nodes genuinely select a time that is indeed random and not a shorter duration chosen purposely in order to win; and second, that the winner has indeed completed the waiting time.
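The lottery itself is simple to sketch. The example below only models the "shortest random wait wins" rule; it does not enforce either of the two guarantees just described, which in practice depend on trusted hardware. The function name `run_poet_round` and the `max_wait` parameter are hypothetical.

```python
import random


def run_poet_round(node_ids, rng, max_wait=10.0):
    """Give every node a random wait time; the node with the shortest wait wins."""
    waits = {node: rng.uniform(0, max_wait) for node in node_ids}
    winner = min(waits, key=waits.get)
    return winner, waits


rng = random.Random(7)  # seeded only so the sketch is repeatable
winner, waits = run_poet_round(["n1", "n2", "n3", "n4"], rng)
print(winner, round(waits[winner], 3))
```

Since every node draws from the same distribution, each has an equal chance of winning a given round.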

The PoET concept was invented in early 2016 by Intel. It offers a ready-made tool to solve the computing problem of “random leader election.” It was designed to run inside a trusted execution environment (TEE), a protected area of the processor that Intel provides through its Software Guard Extensions (SGX) hardware. This mechanism allows applications to execute trusted code in a protected environment, which ensures that both requirements (a randomly selected waiting time for all participating nodes and genuine completion of the waiting time by the winning participant) are fulfilled.

The mechanism of running trusted code within a secure environment also takes care of many other necessities of the network. It ensures that the trusted code indeed runs within the secure environment and is not alterable by any external participant. It also ensures that the results are verifiable by external participants and entities, thereby enhancing transparency of the network consensus.

Pros:

- Low cost of participation, so more people can participate easily, which favors decentralization.

- It is simple for all participants to verify that the leader was legitimately selected.

- The cost of controlling the leader election process is proportional to the value gained from it.

Cons:

- Even though it’s cheap, you have to use Intel’s specialized hardware. This can affect standardization and adoption.

- May not be suited for public blockchains because of its reliance on a singular hardware vendor susceptible to internal corporate and/or government influence.

Used by: Hyperledger Sawtooth

Byzantine Fault Tolerance

The rest of the algorithms mentioned in this section are voting-based algorithms focused on solving the Byzantine generals’ problem, a classic problem in distributed computing. In the problem, several Byzantine generals and their respective portions of the Byzantine army have surrounded a city. They must decide in unison whether or not to attack. If some generals attack without the others, their siege will end in tragedy. The generals are usually separated by distance and have to pass messages to communicate. In order to be successful, the generals need to reach consensus based on those exchanged messages.

Byzantine Failures

A Byzantine Fault is an arbitrary fault that occurs during the execution of an algorithm by a distributed system. It encompasses those faults that are commonly referred to as “crash failures” and “send and omission failures”. Byzantine failures may be loosely categorized as follows:

- a failure to take another step in the algorithm, also known as a crash failure. A failure may be accidental (hardware failure) or malicious (compromised network node)

- arbitrary execution of a step other than the one indicated by the algorithm

Several DLT protocols use some version of BFT to manage Byzantine Failures and come to consensus, each with their own pros and cons.

Practical Byzantine Fault Tolerance (PBFT):

One of the first solutions to this problem was Practical Byzantine Fault Tolerance. It is currently in use by Hyperledger Fabric, and with a small number of pre-selected generals PBFT runs very efficiently.

These algorithms are primarily used for achieving consensus among a limited group of nodes (a few participants in the case of multisignature, or dozens of participants, usually equals, in the case of BFT). It makes sense to use BFT when all the parties in the process know each other, and the list of them doesn’t change often. One example would be Walmart’s food traceability system. Walmart can, as of the writing of this report, trace the origin of over 25 products from 5 different suppliers using a system powered by Hyperledger Fabric and expects to roll out this solution to all of its established food vendors over time.
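As background that is not stated in this report, the classical result for PBFT-style protocols is that tolerating f Byzantine nodes requires at least 3f + 1 nodes, with 2f + 1 matching votes needed to decide. The short sketch below encodes that arithmetic; the function names are hypothetical, and the 2f + 1 quorum assumes a network of exactly 3f + 1 nodes.

```python
def min_nodes(f):
    """Smallest network size that tolerates f Byzantine nodes (classical bound)."""
    return 3 * f + 1


def quorum(f):
    """Matching votes needed to decide in a network of exactly 3f + 1 nodes."""
    return 2 * f + 1


def max_faulty(n):
    """Largest number of Byzantine nodes an n-node network can tolerate."""
    if n < 1:
        raise ValueError("network must contain at least one node")
    return (n - 1) // 3


for f in (1, 2, 3):
    print(f, min_nodes(f), quorum(f))
# prints:
# 1 4 3
# 2 7 5
# 3 10 7
```

This is why small, known groups suit the model: each additional tolerated fault adds three more nodes that must all exchange messages.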

Pros:

- High transaction throughput

- Provides transaction finality without the need for confirmations. If a proposed block is agreed upon by the appropriate number of nodes in a pBFT system, then that block is final. This is enabled by the fact that all honest nodes are agreeing on the state of the system at that specific time as a result of their communication with each other.

- Energy efficiency

Cons:

- Centralized/permissioned

- The model only works well in its classical form with small consensus group sizes due to the cumbersome amount of communication that is required between the nodes.

- The pBFT model is also susceptible to sybil attacks where a single party can create or manipulate a large number of identities (nodes in the network), thus compromising the network. This is mitigated by larger network sizes, but scalability and the high-throughput ability of the pBFT model is reduced with larger sizes and thus compromises one of the advantages of the protocol.

Used by: Hyperledger Fabric

Federated Byzantine Agreement (FBA):

FBA is another class of solutions to the Byzantine generals’ problem used by currencies like Stellar and Ripple. The general idea is that every Byzantine general, responsible for their own chain, sorts messages as they come in to establish truth. In Ripple the generals (validators) are pre-selected by the Ripple foundation. In Stellar, anyone can be a validator, so you choose which validators to trust. FBA has been lauded for its incredible throughput, low transaction cost, and network scalability.

FBA, which was first introduced by Ripple and then formally proven by Stellar, permits reaching consensus among large numbers of participants who don’t know each other personally, and in situations when the total number of participants may not even be known. Each participant extends trust to only a limited number of other participants, and therefore achieves consensus only amongst a narrow circle. However, since each of these circles has some element of overlap with others, it’s possible to achieve overall consensus.
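The overlapping circles of trust can be sketched with the notion of a quorum slice. The example below borrows that terminology from descriptions of the Stellar Consensus Protocol as an assumption: a set of nodes counts as a quorum when it contains at least one trusted slice for each of its members. The node names and slices are made up for illustration.

```python
def is_quorum(candidate, slices):
    """True if `candidate` contains at least one slice of every one of its members.

    slices maps each node to a list of sets; each set is one group of nodes
    (including the node itself) whose agreement that node is willing to trust.
    """
    return bool(candidate) and all(
        any(one_slice <= candidate for one_slice in slices[node])
        for node in candidate
    )


slices = {
    "a": [{"a", "b"}],
    "b": [{"b", "c"}],
    "c": [{"c", "b"}],
    "d": [{"d", "c"}],
}

print(is_quorum({"b", "c"}, slices))       # prints True
print(is_quorum({"a", "b"}, slices))       # prints False: b also needs c
print(is_quorum({"a", "b", "c"}, slices))  # prints True
```

Node `a` never chose to trust `c`, yet any quorum containing `a` also contains `c` because `b` links the two circles.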

Pros:

- Highest transaction throughput of all of the BFT protocols

- Consensus does not require a full quorum to agree, only the ones chosen by the participant.

Cons:

- Centralized/permissioned.

- Primarily used by payment rails, not general distributed ledgers.

Used by: Ripple, Stellar

Redundant Byzantine Fault Tolerance

Redundant Byzantine Fault Tolerance (RBFT) is a consensus algorithm proposed by Pierre-Louis Aublin, Sonia Ben Mokhtar, and Vivien Quéma. As described in their paper, existing BFT protocols use a special replica, called the “primary”, which indicates to other replicas the order in which requests should be processed. This primary can be smartly malicious and degrade the performance of the system without being detected by correct replicas. Their evaluations show that RBFT performs as well as other BFT protocols when there is no failure and that, under faults, its performance remains at 97%, whereas performance degradation can be as much as 78% for the other BFT protocols.

RBFT implements a new approach whereby multiple instances of the protocol run simultaneously: a Master instance and one or more Backup instances. All the instances order the requests, but only the requests ordered by the Master instance are actually executed. All nodes monitor the Master and compare its performance with that of the Backup instances. If the Master does not perform acceptably, it is considered malicious and replaced.
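The monitoring step can be sketched as a simple comparison. This is an illustration only, assuming performance is measured as throughput and that the Master is flagged when it falls below a fixed fraction of the best Backup instance; the `tolerance` value and the function name are hypothetical, and the actual RBFT criteria are defined in the authors' paper.

```python
def should_replace_master(master_tps, backup_tps, tolerance=0.9):
    """Flag the Master if its throughput drops below tolerance x the best Backup."""
    if not backup_tps:
        return False  # nothing to compare against
    return master_tps < tolerance * max(backup_tps)


healthy = should_replace_master(1000, [1000, 980])
degraded = should_replace_master(500, [1000, 980])
print(healthy, degraded)  # prints False True
```

Because the Backup instances order the same requests, a Master that slows the system down on purpose becomes visible by comparison.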

Pros:

- Fast. Scalable.

- Solves the malicious primary problem. Remains a high performer even under faulty conditions.

Cons:

- Best suited for permissioned or private blockchains

- The system is size constrained in that adding too many nodes significantly reduces throughput.

- Relatively new implementation of a BFT protocol

Used by: Hyperledger Indy, Sovrin

LibraBFT

On 18 June 2019 Facebook announced the launch of “Project Libra,” a new type of digital money designed for the billions of people using its apps and social network. The project is based on blockchain technology with an architecture similar to Ethereum including smart contracts, transaction incentives (gas), and a programming language (Move). Underlying the ledger is a modified BFT consensus protocol named LibraBFT.

LibraBFT belongs to the family of leader-based consensus protocols. In leader-based protocols, validators make progress in rounds, each having a designated validator called a leader. Leaders are responsible for proposing new blocks and obtaining signed votes from the validators on their proposals.

LibraBFT operates in rounds, where a round is a communication phase with a single designated leader, and leader proposals are organized into a chain using cryptographic hashes. During a round, the leader proposes a block that extends the longest chain it knows. If the proposal is valid and timely, each honest node will sign it and send a vote back to the leader. After the leader has received enough votes to reach a quorum, it aggregates the votes into a Quorum Certificate (QC) that extends the same chain again. The QC is broadcast to every node. If the leader fails to assemble a QC, participants will time out and move to the next round. Eventually, enough blocks and QCs will extend the chain in a timely manner, and a block will match the commit rule of the protocol. When this happens, the chain of uncommitted blocks up to the matching block becomes committed.
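A single round can be sketched as vote collection followed by an attempt to build a Quorum Certificate. This is a minimal model, assuming equal voting power per validator and a quorum size passed in by the caller; `QuorumCertificate` and `run_round` are hypothetical names, and signatures, chaining, and the commit rule are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuorumCertificate:
    block_id: str
    signers: frozenset


def run_round(block_id, votes, quorum):
    """Aggregate votes for the leader's proposal; return a QC or None on timeout.

    votes maps each validator to the block id it voted for (None if it did not vote).
    """
    signers = frozenset(v for v, voted in votes.items() if voted == block_id)
    if len(signers) >= quorum:
        return QuorumCertificate(block_id, signers)
    return None  # no QC assembled: participants time out and move to the next round


enough = run_round("B1", {"v1": "B1", "v2": "B1", "v3": "B1", "v4": None}, quorum=3)
too_few = run_round("B2", {"v1": "B2", "v2": "B2", "v3": None, "v4": None}, quorum=3)
print(enough is not None, too_few is None)  # prints True True
```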

LibraBFT improves the performance of typical BFT protocols by reducing the amount of internode communication required to validate and commit a block. It does this by focusing the broadcasts of changes to be authenticated to a subset of the network, and then waiting until the minimum number of responses is received to propose a commitment.

LibraBFT is categorized in this report as a BFT protocol even though the whitepapers suggest that it can eventually be a POS protocol. Until this position is further detailed, including the method of staking, leader election, and governance (rewards and punishment for network behavior) it is best to think of Libra as a permissioned BFT blockchain.

Pros:

- Scalable. It is estimated to be able to scale to about 1,000 transactions per second

- Simplifies the leader selection issue

- Leverages the largest social network as its embedded base.

- Can be extended into a permissionless protocol in the future

Cons:

- Best suited for permissioned or private blockchains at this time

- Built around a “central bank” concept called the reserve

- Untested governance and implementation

- Heavy scrutiny by governments as it is considered a threat to established banking systems and regulation

Used by: Libra

Raft

Raft is a consensus algorithm designed as an alternative to Paxos[7]. It was meant to be more understandable than Paxos by means of separation of logic, but it is also formally proven safe and offers some additional features. Raft offers a generic way to distribute a state machine across a cluster of computing systems, ensuring that each node in the cluster agrees upon the same series of state transitions.

Raft achieves consensus via an elected leader. A server in a Raft cluster is either a leader or a follower and can be a candidate in the precise case of an election (leader unavailable). The leader is responsible for log replication to the followers. It regularly informs the followers of its existence by sending a heartbeat message. Each follower has a timeout (typically between 150 and 300 ms) in which it expects the heartbeat from the leader. The timeout is reset on receiving the heartbeat. If no heartbeat is received, the follower changes its status to candidate and starts a leader election.
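The heartbeat and timeout behavior can be sketched with a small state holder. This is a simplified model driven by explicit `tick` calls instead of real timers; the `Follower` class is hypothetical, and it omits voting, terms, and log replication.

```python
class Follower:
    """Toy Raft server that becomes a candidate when heartbeats stop arriving."""

    def __init__(self, election_timeout_ms):
        self.election_timeout_ms = election_timeout_ms  # typically 150 to 300 ms
        self.elapsed_ms = 0
        self.state = "follower"

    def on_heartbeat(self):
        """A heartbeat from the leader resets the timeout."""
        self.elapsed_ms = 0
        self.state = "follower"

    def tick(self, ms):
        """Advance the clock; switch to candidate once the timeout expires."""
        self.elapsed_ms += ms
        if self.state == "follower" and self.elapsed_ms >= self.election_timeout_ms:
            self.state = "candidate"  # would now start a leader election
        return self.state


node = Follower(election_timeout_ms=200)
states = [node.tick(100)]
node.on_heartbeat()
states.append(node.tick(150))
states.append(node.tick(100))
print(states)  # prints ['follower', 'follower', 'candidate']
```

In practice each follower picks its timeout at random within the range so that two followers rarely become candidates at the same moment.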

Pros:

- Simpler model than Paxos but offers same safety.

- Implementation available in many languages.

Cons:

- Usually used in private, permissioned networks.

Used by: Corda and Quorum

Directed Acyclic Graphs

Regardless of the consensus pattern adopted from the ones described above, the scalability issue remains at the front and center of the discussions within the crypto community. Applications that require scalability in the thousands of transactions per second or support for micropayments where the cost of processing cannot exceed the value exchanged simply can’t be accommodated by any of the current blockchain solutions. That’s why Directed Acyclic Graphs (DAGs) began appearing in the DLT space in 2015.

Directed Acyclic Graph (DAG) technology removes the constraints of throughput and cost by redefining the basic structure of the block-oriented database and the protocol used within the network. Before we explain the concepts used in a Directed Acyclic Graph distributed ledger, let’s understand how it is different from Blockchain.

| Attribute | Blockchain | Directed Acyclic Graph (DAG) |
| --- | --- | --- |
| Transaction handling | Transactions are grouped into blocks and are sequentially added into a chain. | Transactions are not grouped together. Each transaction is processed individually and then linked transaction to transaction rather than reprocessed and connected block to block. |
| Transaction validation | Requires the process of mining to validate transactions. Proof of work from miners who manage to find a valid hash is needed. Each validated transaction, however, incents the miner with a cryptocurrency reward. | Users in the network confirm each other’s transactions. Each transaction carries its own Proof of Work, and each new transaction validates at least one previous transaction. Without the need for miners, however, there is no explicit incentive / deterrent in the system. |
| Energy consumption | Because the technology relies on every miner in the network competing to complete the proof in order to get the right and rewards for writing the next block, the system requires high energy consumption to mine a single block. | Since each transaction carries its own proof of work, no mining is involved and therefore dramatically reduces the amount of energy required. |
| Speed | Best transaction confirmation time is 10 minutes. | Average confirmation time is 30 seconds. |
| Reliability | Centralization of mining due to the increasing presence of mining pools could potentially lead to such groups having control on the majority of the network mining power and increasing the likelihood of double spending. | It’s essentially a distributed peer-confirming system. Transaction orders are through multiple transactions due to the nature of the DAG structure, decreasing the likelihood of double spending. |

Table 2 – Comparison of Blockchain and DAG behavior

DAG stands for Directed Acyclic Graph. It is a directed graph data structure that uses a topological ordering. The sequence can only go from earlier to later. DAG is often applied to problems related to data processing, scheduling, finding the best route in navigation, and data compression.

In general, it all comes down to a kind of web, consisting of nodes connected to each other with edges. An edge is basically a connection between nodes with a specific direction. It is not possible to traverse it in the opposite direction. Acyclic means that it’s not possible to encounter the same node for the second time when moving from node to node by following the edges. In other words, it is non-circular.
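Both properties, direction and the absence of cycles, can be demonstrated with Python's standard `graphlib` module (available from Python 3.9). In this sketch each transaction maps to the set of earlier transactions it references; the transaction names and the `order_or_none` helper are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter


def order_or_none(references):
    """Return an earlier-to-later ordering, or None if the graph contains a cycle.

    references maps each node to the set of nodes that must come before it.
    """
    try:
        return list(TopologicalSorter(references).static_order())
    except CycleError:
        return None


# d references b and c, which both reference a.
dag = {"b": {"a"}, "c": {"a"}, "d": {"b", "c"}}
order = order_or_none(dag)
print(order[0], order[-1])  # prints a d

# Two nodes that reference each other form a cycle, so no ordering exists.
cyclic = {"a": {"b"}, "b": {"a"}}
print(order_or_none(cyclic))  # prints None
```

The relative order of `b` and `c` is not fixed, which reflects the fact that independent branches of a DAG can be processed in parallel.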

One of the differences lies in the data structure. Instead of adding blocks sequentially to a chain, a DAG ledger links transactions in a directed acyclic graph (or web). Validation is therefore parallelized, which results in higher throughput.

DAG works differently than the blockchain. Whereas a Proof of Work blockchain requires miners to produce a proof for every block, the DAG gets around this by getting rid of the block entirely. Instead, DAG transactions are linked from one to another, meaning each new transaction confirms earlier ones and so on. These links are where the term DAG comes from, just as the chaining of hashed blocks is where the blockchain gets its name.

Figure 6 – Graph structure example

Directed Acyclic Graph is a data structure which implements the approach of topological ordering. DAG is usually correlated to problems of data processing, finding the best route in navigation, data compression, and scheduling.

When a transaction is registered in a node, it first has to verify two other transactions before its transaction will be verified. Those two transactions are chosen according to an algorithm. The node has to check if the two transactions are not conflicting. For a node to issue a valid transaction, it must solve a hashcash cryptographic puzzle similar to those in the Bitcoin network (Proof of Work). Just two verifications are needed to verify a transaction. This gives the benefit of a drastic decrease in unnecessary verification.
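The attach step can be sketched as a small hashcash-style search over a nonce. This is an illustration only: the difficulty of two leading zero hex digits, the field layout, and the `attach` and `verify` helpers are assumptions made for the example, and the conflict check between the two approved transactions is not modeled.

```python
import hashlib
from itertools import count


def _digest(data, approved, nonce):
    a, b = approved
    return hashlib.sha256(f"{data}|{a}|{b}|{nonce}".encode()).hexdigest()


def attach(data, approved, difficulty=2):
    """Approve two earlier transactions and find a nonce meeting the difficulty."""
    if len(approved) != 2:
        raise ValueError("a new transaction must approve exactly two others")
    prefix = "0" * difficulty
    for nonce in count():
        tx_id = _digest(data, approved, nonce)
        if tx_id.startswith(prefix):
            return {"data": data, "approves": tuple(approved), "nonce": nonce, "id": tx_id}


def verify(tx, difficulty=2):
    """Recompute the hash and check it against the claimed id and difficulty."""
    tx_id = _digest(tx["data"], tx["approves"], tx["nonce"])
    return tx_id == tx["id"] and tx_id.startswith("0" * difficulty)


tx = attach("alice pays bob 1", ["tip-1", "tip-2"])
print(tx["approves"], verify(tx))  # prints ('tip-1', 'tip-2') True
```

The puzzle is small enough for an ordinary device to solve, yet it makes flooding the network with transactions more costly.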

Pros:

- Quick Transactions. Due to its blockless nature, the transactions run directly into the DAG networks. The whole process is much faster than those of blockchains based on PoW and PoS. For example, a Hashgraph transaction performance test was conducted on Amazon AWS across five continents and over eight regions. The test confirmed a Hashgraph DAG can validate and confirm more than 50,000 transactions in a second.

- No Mining Involved. There are no miners on DAG networks. The validation of transactions goes directly to the transactions themselves. For users, this means transactions go through almost instantly.

- Supports Small or Zero Payments. The idea behind introducing DAG technology is to make a network functional and smooth with minimum transaction fees. It can become possible for users to send micro-payments without paying heavy prices, unlike Ethereum and Bitcoin.

- Time of confirmation and speed of execution are not dependent on the block-size, but on the bandwidth between communicating nodes. Therefore, there is no restriction on how much the system can scale.

Cons:

- Smart contract implementation may require the use of oracles. In DAGs, the order between transactions is not deterministic but correct ledger state calculation is not impacted because the addition and subtraction of an account’s token (cryptocurrency) balance can satisfy math’s commutative law. As long as the balance of any account is not less than 0, the order of transactions is not essential at all. Therefore, no matter how the DAG ledger is traversed, the final calculated account balance will be the same. In other words, any full node can restore the correct account state from the DAG ledger. However, smart contracts are programs that make assumptions about transaction precedence and timing (think about transactions triggered on the basis of scheduled events happening or escrow accounts that are settled when a set of conditions arise). This can be complex to accomplish without using an outside process to gather and order the transactions, especially in light nodes where only a subset of the ledger resides.

- Low transaction rates can lead to double spend vulnerabilities because a network participant with enough computing power could flood the network and corrupt the tip selection and validation process. At higher rates this becomes less of a possibility.

- DAGs remain unproven as they are a newer DLT technology.
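The commutativity argument in the first item above can be checked directly. The sketch below applies the same credits and debits in every possible order and confirms the final balance is always the same; the opening balance and amounts are made up, and the sketch does not model the rule that a balance may never drop below zero along the way.

```python
from itertools import permutations


def final_balance(opening, deltas):
    """Apply credits (+) and debits (-) to an opening balance, in the given order."""
    balance = opening
    for delta in deltas:
        balance += delta
    return balance


deltas = [+50, -20, -25, +10]
results = {final_balance(100, order) for order in permutations(deltas)}
print(results)  # prints {115}
```

A smart contract whose outcome depends on which transaction arrived first has no such guarantee, which is why an external ordering process may be needed.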

Used by: IOTA, NANO, Byteball, Hashgraph

While the jury is still out on DAGs, it is clear that the limitations of the current blockchain architecture will need to be addressed before mass adoption can take place.

Recommendations

Despite all the benefits, blockchain adoption still requires a deep understanding of the technology and its development features. The blockchain movement is similar to the early days of the Web, where inventors and pioneers are searching for proprietary answers to specific problems, even as they strive to create the next great Internet platform. While the projects are all being developed as open source projects, the rules, governance, and specifications remain unique. Broad adoption of blockchain as a platform will require us to step back and abstract the architecture into component layers, as was done in the network OSI model. Abstractions and standardization should be developed around many areas including:

- Internode communications

- Consensus

- Smart contracts and virtual machines

- Serializers, compilers, programming languages

- Encryption and key management

- Off-chain interactions (side chains, oracles, etc.)

- Block/record structure, semantics, and access[8]

- Block/record identifier and capability discovery/directories

The lack of standards and interoperability makes choosing a DLT platform similar to choosing between Betamax and VHS recording formats[9]; if you choose incorrectly, you may eventually have to redevelop your capabilities around a different technology as the market shakes out. We recommend, for now, that you focus your efforts on projects that solve specific problems rather than trying to predict the blockchain platform of the future. Blockchain is still three to five years away from feasibility at scale, primarily because of the difficulty of resolving the “coopetition” in the market and establishing common standards.

Beyond that standardization hurdle, the development of “purpose built” ledgers can complicate the situation. For example, a use case that wants to combine decentralized identity (Sovrin), a major cryptocurrency (Bitcoin), and smart contracts (Ethereum) may have to interact with three separate blockchain architectures in order to accomplish its goals. Try to simplify your project in order to minimize the need to manage these different architectures. If that can’t be done, then look to isolate the interactions via techniques like APIs or microservices in order to reduce the impact of independent blockchain system evolution.
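One way to picture the isolation suggested above is a thin gateway that the application calls instead of calling each ledger directly. The following Python sketch is illustrative only: `LedgerClient`, `InMemoryLedger`, and `LedgerGateway` are hypothetical names, and the in-memory class stands in for a real adapter that would wrap a specific platform's SDK.

```python
from typing import Dict, Protocol


class LedgerClient(Protocol):
    """Hypothetical interface the application codes against."""

    def submit(self, payload: dict) -> str:
        ...


class InMemoryLedger:
    """Stand-in adapter; a real one would wrap a specific platform's SDK."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.records = []

    def submit(self, payload: dict) -> str:
        self.records.append(payload)
        # Return a made-up receipt identifier for the stored record.
        return f"{self.name}-{len(self.records)}"


class LedgerGateway:
    """Routes each request to the adapter registered for its purpose."""

    def __init__(self) -> None:
        self._clients: Dict[str, LedgerClient] = {}

    def register(self, purpose: str, client: LedgerClient) -> None:
        self._clients[purpose] = client

    def submit(self, purpose: str, payload: dict) -> str:
        return self._clients[purpose].submit(payload)
```

With this shape, replacing the platform behind "identity" or "payments" means registering a different adapter, while application code that calls `LedgerGateway.submit` stays unchanged.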

Finally, data consistency in an adversarial environment is something that developers of decentralized systems commonly face but may not fully understand. The consensus protocol and algorithm used are at the heart of any decentralized system. Consensus decides whether to commit a transaction to a distributed database, chooses a node as a leader that organizes the updates, recognizes and isolates bad behavior, synchronizes state machine replicas, and ensures consistency among them. Bitcoin blockchain consensus is by far the most used and established consensus mechanism, but its known limitations have spawned many alternatives, each trying to solve specific issues encountered. Each of the consensus patterns has different performance, safety, and liveness attributes. This is why we provided details on various consensus patterns in this report.

As you look to choose (or develop) a blockchain network for your project, you need to take into consideration a number of factors determined by the consensus mechanism of the DLT system chosen.

- Scalability: As the number of participants and transactions increases, a DLT network must be able to scale to accommodate it. Scaling challenges have become a major issue for blockchain projects such as Bitcoin and Ethereum, whose transaction speeds have an established upper limit. Other platforms promise better performance and should be evaluated as alternatives if transaction performance is critical.

- Security: Most of the platforms are just starting to come online[10] and, as such, need to be evaluated as you would evaluate any new technology that hasn’t been stress-tested through years of use. Risk mitigation through recovery and backup capabilities that address DLT failure or malicious behavior needs to be part of your planning.

- Transaction costs: Recording on Bitcoin or Ethereum is expensive due to the incentives built into the protocol. That might be acceptable if the transactions are few and the value outweighs the cost. However, applications such as IoT need low-latency, low-cost performance that these mainstream ledger platforms cannot provide. Look to other platforms, such as DAGs, as an alternative.

Beyond the technology itself, look at other factors when choosing a blockchain network.

- Community Support: An acceptable level of support is crucial for blockchain development. How accessible is the platform’s community to provide feedback and support?

- Skill Availability: Blockchain is still considered to be in its infancy, and many of the programming languages and algorithms are new. Which platforms allow developers to work in a coding language they already know? For new languages, how extensive is the available documentation and training?

- Multifunctionality and Adaptability: Will the blockchain platform you choose need to adapt to an existing technology? What functions would it require? Compatibility issues can hinder the smooth operation of blockchain systems within an enterprise. To avoid this, companies must ensure that their intended blockchain platform can efficiently integrate with the already existing business systems of the enterprise.

The current wave of blockchain technology promises not only to bring new technological solutions but also to re-think and re-standardize existing processes, remove friction, and lower costs. In fact, our analysis suggests the Blockchain’s short-term value will be predominantly in reducing cost before creating transformative business models.

With the right strategic approach, companies can start extracting value in the short term. Pick the right blockchain system based on the factors above, and look at how you can shape the ecosystem, establish standards, and address regulatory barriers to ensure the system can meet your needs as it matures.

Glossary

Bound wallet – An address where a node places a number of tokens that cannot be spent while the node is part of the blockchain validation process. Participating in the validation process allows the node to collect block creation and transaction fees from the network.

Consensus – Consensus is the process of agreeing on one result among a group of participants within an adversarial environment to guarantee data consistency. It is characterized more formally by the following properties:

- Agreement – If any correct process believes that V is the correct answer, then all correct processes believe V is the correct answer.

- Validity – If V is the consensus value, then some process proposed V.

- Termination – Eventually, every correct process decides some value V.
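As a rough illustration, the three properties can be checked against the outcome of a single finished run. In this Python sketch the `check_consensus` function and the process names are hypothetical; `decisions` is assumed to contain only correct (non-faulty) processes, and since termination is an "eventually" property, the check is only meaningful once the run is over.

```python
def check_consensus(proposals, decisions):
    """Check agreement, validity, and termination for one finished run.

    proposals: process name -> value that process proposed
    decisions: correct process name -> decided value, or None if undecided
    """
    decided = [value for value in decisions.values() if value is not None]
    proposed = set(proposals.values())
    return {
        # Agreement: no two correct processes decided different values.
        "agreement": len(set(decided)) <= 1,
        # Validity: every decided value was proposed by some process.
        "validity": all(value in proposed for value in decided),
        # Termination: every correct process has decided a value.
        "termination": all(value is not None for value in decisions.values()),
    }
```

For example, a run in which one process decides "A", another decides "B", and a third never decides fails both agreement and termination while still satisfying validity.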

Denial of Service Attack – is a cyber-attack in which the perpetrator seeks to make a machine or network resource unavailable to its intended users by temporarily or indefinitely disrupting services of a host connected to the Internet.

In a distributed denial-of-service attack (DDoS attack), the incoming traffic flooding the victim originates from many different sources. This effectively makes it impossible to stop the attack simply by blocking a single source.

A DoS or DDoS attack is analogous to a group of people crowding the entry door of a shop, making it hard for legitimate customers to enter, thus disrupting trade.

Decentralization – In a decentralized system, the information is not stored by one single entity. In fact, every node in the network owns the information.

Fork – Forks can be classified as accidental or intentional. An accidental fork happens when two or more miners find a block at nearly the same time. The fork is resolved when subsequent block(s) are added and one of the chains becomes longer than the alternative(s). The network abandons the blocks that are not in the longest chain (they are called orphaned blocks).
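The resolution rule for an accidental fork can be sketched in a few lines of Python. The `resolve_fork` function and the block labels are hypothetical, and branch length is used to mirror the definition above; Bitcoin itself compares accumulated proof-of-work rather than raw block count.

```python
def resolve_fork(branches):
    """Pick the longest branch and list the blocks orphaned by that choice.

    branches: list of chains, each a list of block ids from a common ancestor.
    Returns (winner, orphaned); winner is None while the fork is still tied.
    """
    longest = max(len(branch) for branch in branches)
    leaders = [branch for branch in branches if len(branch) == longest]
    if len(leaders) > 1:
        return None, []  # tie: wait for the next block to break it
    winner = leaders[0]
    kept = set(winner)
    orphaned = [
        block
        for branch in branches
        if branch is not winner
        for block in branch
        if block not in kept
    ]
    return winner, orphaned
```

While two branches have the same length the function reports no winner; as soon as one branch gains an extra block, the blocks unique to the other branch are returned as orphaned.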

Full node – In a DAG, a full node has a complete copy of the DAG stored. It has to constantly be online. In a full node, one’s own transactions will be processed more quickly.

Hashcash – Hashcash is a proof-of-work algorithm originally used to limit email spam and denial-of-service attacks, and more recently has become known for its use in bitcoin (and other cryptocurrencies) as part of the mining algorithm. Hashcash is a cryptographic hash-based proof-of-work algorithm that requires a selectable amount of work to compute, but the proof can be verified efficiently.
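The asymmetry between costly computation and cheap verification can be shown with a simplified, Hashcash-style sketch in Python. It is not the actual Hashcash stamp format or Bitcoin's mining algorithm: the `mint` and `verify` helpers, the `message:nonce` encoding, and the use of SHA-256 are assumptions made for illustration.

```python
import hashlib
from itertools import count


def leading_zero_bits(digest: bytes) -> int:
    """Count the zero bits at the start of a hash digest."""
    value = int.from_bytes(digest, "big")
    return len(digest) * 8 - value.bit_length()


def verify(message: str, nonce: int, bits: int) -> bool:
    """Verification costs a single hash, whatever the difficulty."""
    digest = hashlib.sha256(f"{message}:{nonce}".encode()).digest()
    return leading_zero_bits(digest) >= bits


def mint(message: str, bits: int) -> int:
    """Search for a nonce; expected work doubles with each extra bit."""
    for nonce in count():
        if verify(message, nonce, bits):
            return nonce
```

The `bits` parameter is the selectable amount of work: finding a nonce takes about 2^bits hash attempts on average, while checking a claimed nonce always takes one.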

Immutability – Immutability, in the context of the blockchain, means that once something has been entered into the blockchain, it cannot be tampered with.

Light node – Depends on full nodes to validate its transactions. Slower to get one’s transactions performed. Does not require a lot of storage and does not need to be online all the time. This is important in the context of IoT and other small internal storage devices (mobile devices).

Mempool – The memory pool is a “waiting area” for Bitcoin transactions that each full node maintains for itself. After a transaction is verified by a node, it waits inside the mempool until it’s picked up by a Bitcoin miner and inserted into a block. When a node receives the latest mined block from the miner, it removes all the transactions contained in this block from its mempool and begins to fill it again for the next update cycle.
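The lifecycle described above can be sketched as a small Python class. The `Mempool` class, its method names, and the `is_valid` callback are hypothetical simplifications; a real node also applies fee, size, and conflict rules that are omitted here.

```python
class Mempool:
    """Per-node waiting area for verified but unconfirmed transactions."""

    def __init__(self, is_valid):
        self._is_valid = is_valid  # the node's own verification rule (assumed)
        self._pending = {}         # txid -> transaction, in arrival order

    def accept(self, txid, tx):
        """Verify a transaction and, if valid, hold it until it is mined."""
        if not self._is_valid(tx):
            return False
        self._pending[txid] = tx
        return True

    def on_new_block(self, block_txids):
        """Drop every transaction that the newly mined block confirmed."""
        for txid in block_txids:
            self._pending.pop(txid, None)

    def pending(self):
        """Return the ids of transactions still waiting for a block."""
        return list(self._pending)
```

Each node keeps its own instance, so two nodes can hold different pending sets at the same moment.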

Multisignature – refers to requiring more than one digital signature (key) to authorize a DLT transaction. These are often referred to as M-of-N transactions. The idea is that tokens become “encumbered” by providing addresses of multiple parties, thus requiring cooperation of those parties in order to do anything with them. These parties can be people, institutions or smart contracts.
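The M-of-N rule itself reduces to a threshold check, sketched below in Python. The `is_authorized` function and party names are hypothetical, and `signed_by` is assumed to list parties whose signatures have already been cryptographically verified; the signature verification step is outside this sketch.

```python
def is_authorized(required, authorized_parties, signed_by):
    """Return True when at least `required` distinct authorized parties signed.

    required: M, the minimum number of signatures
    authorized_parties: the N parties whose addresses encumber the tokens
    signed_by: parties whose signatures were already verified (assumption)
    """
    parties = set(authorized_parties)
    if not 1 <= required <= len(parties):
        raise ValueError("M must be between 1 and N")
    # Duplicates and signatures from outsiders do not count toward M.
    distinct_valid = set(signed_by) & parties
    return len(distinct_valid) >= required
```

In a 2-of-3 arrangement, any two of the three parties can release the tokens, but one party signing twice cannot.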

Oracle – Blockchains cannot access data outside their network. An oracle is a data feed – provided by a third-party service – designed for use in smart contracts on the blockchain. Oracles provide external data and trigger smart contract executions when pre-defined conditions are met. Such conditions could involve any data, such as temperature, a successful payment, or price fluctuations.

Payment rail – A payment rail is a payment platform or a payment network that moves money from payer to payee. Either party could be a consumer or business, and both parties are able to move funds on the network.

Project – Most of the DLT efforts outlined in this report are called projects because they are organized efforts focused on solving a specific problem. Bitcoin was developed as an alternative to central banking. Ethereum was developed to be a ‘World Computer’ that would disrupt the existing client-server model of the Internet.

Quorum – A Byzantine Agreement is reached when a certain minimum number of nodes (known as a quorum) agrees that the solution presented is correct, thereby validating a block and allowing its inclusion on the blockchain.
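The glossary entry does not state how large the quorum must be. As an assumption, the Python sketch below uses the classical Byzantine fault tolerance bound, in which n nodes tolerate at most f faulty nodes when n is at least 3f + 1, and a quorum is sized so that any two quorums share at least f + 1 nodes. The function names are hypothetical, and individual protocols may define their thresholds differently.

```python
def max_faulty(n: int) -> int:
    """Largest f such that n >= 3f + 1 (classical BFT assumption)."""
    if n < 1:
        raise ValueError("need at least one node")
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest q with 2q - n >= f + 1, so two quorums share an honest node."""
    f = max_faulty(n)
    return (n + f + 2) // 2  # integer form of ceil((n + f + 1) / 2)
```

For a network of exactly 3f + 1 nodes this gives the familiar quorum of 2f + 1, for example 3 of 4 or 5 of 7.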

Quorum Certificate – Within the LibraBFT protocol, a leader is charged with gathering block validation “votes” from the minimum number of validators to ensure the safety of the block. Once the correct number of votes are received, the leader produces a quorum certificate that is broadcast to the entire validator pool to begin the protocol’s three-step commit process.

Security – In DLT, security is based on cryptography. The use of data encryption, hashing, and digital signatures helps to ensure that transactions are protected and remain unaltered.

Shard – Blockchain sharding splits the entire state of the network into a set of partitions called shards, each containing its own independent piece of state and transaction history. In this system, certain nodes process transactions only for certain shards, allowing the total throughput across all shards to be much higher than when a single main chain (e.g., Bitcoin, Ethereum) does all the work, as it does now.
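A minimal Python sketch of the partitioning idea follows. Assigning accounts to shards by hashing the sender's address is an assumption made for illustration, as are the `shard_for` and `route` helpers and the transaction fields; real sharding designs differ and must also handle transactions that span shards, which this sketch ignores.

```python
import hashlib
from collections import defaultdict


def shard_for(account: str, num_shards: int) -> int:
    """Map an account to a shard deterministically (hash-based assumption)."""
    digest = hashlib.sha256(account.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


def route(transactions, num_shards):
    """Group transactions by the shard that owns the sender's state."""
    by_shard = defaultdict(list)
    for tx in transactions:
        by_shard[shard_for(tx["sender"], num_shards)].append(tx)
    return dict(by_shard)
```

Each group returned by `route` can then be processed by the nodes responsible for that shard, independently of the other groups.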

Sybil attack – is an attempt to control a peer network by creating multiple fake identities. To outside observers, these fake identities appear to be unique users. However, behind the scenes, a single entity controls many identities at once. As a result, that entity can influence the network through additional voting power in a democratic network, or echo chamber messaging in a social network.

Tip – In a DAG, the tip is an unapproved transaction.

Transparency – In blockchain systems, a person’s identity is hidden via complex cryptography and represented only by their public address. So, while the person’s real identity is secure, all the transactions that were done by that public address are visible via the blockchain. This level of transparency adds an extra level of accountability.

Trustless – Blockchains are considered “trustless,” because there are mechanisms in place by which all parties in the system can reach a consensus on what the canonical truth is. Power and trust are distributed (or shared) among the network’s stakeholders (e.g. developers, miners, and consumers), rather than concentrated in a single individual or entity.

Validation – Every computer in the network checks (validates) the transaction against some validation rules that are set by the creators of the specific blockchain network.

About TechVision