Know Your Agent: IAM Support for AI

Publication Date: 30 January 2025

Abstract

AI offers game-changing benefits for individuals and enterprises but also exposes organizations to potentially devastating risks. One of the greatest AI-related risks, and the focus of this paper, is the management and security of AI agents (Agentic AI) that are designed to take proactive, autonomous actions on behalf of a wide range of stakeholders. From a security perspective, any agent that takes autonomous action must be clearly identified, understood, managed, and governed, and the right IAM foundation can play a major role in supporting this. For most enterprises, the time to address Agentic AI is now, because organizations are increasingly rolling it out in a largely ungoverned manner.

In this report, TechVision Research identifies the overall agentic AI risk management objectives in concert with existing process and technology infrastructure. We also highlight critical mitigation techniques and controls that can prevent the inadvertent enablement of agentic AI to cause significant damage to your organization. These insights will help you establish a philosophy of “know your agent” (KYA) so that organizations are put in positions to succeed while limiting the “blast radius” from a bot-induced mistake or nefarious action. With over 30 years of helping our customers identify and mitigate these risks, TechVision can help with this process.

Authors:

Doug Simmons, Principal Consulting Analyst

Gary Rowe, CEO/Principal Consulting Analyst

Executive Summary

As we rush headlong into Web/Internet 3.0, there is this great unknown called Artificial Intelligence (yes, AI) that is promising to make our (humans’) world so much better. There are plenty of people who say they “know AI” but in reality, nobody really knows what these AI agents or bots are ultimately going to do to us humans and enterprises. Sure, they will help in many ways (at least initially) by performing complex or mundane tasks that humans have tried to do or have done, respectively. No issues there. But here is the quandary: “Agentic AI” is now being rolled out by organizations of all shapes and sizes in all industries in a largely ungoverned manner.

We remember warning organizations against the threat of “shadow IT” growing exponentially as divisions or departments within organizations were rushing to “get something on the cloud” that could be rolled out super quickly and relatively cheaply. In this “model”, it was all about time-to-market and the common refrain was “we will bolt on security as soon as possible – we just have to get this application out there, or else we’ll be replaced by someone who will!” Fear motivates.

In 2025 we have AI large language models (LLMs)/large language processing, generative AI, Deep Learning and Self-aware AI – all of which are being incorporated into “agents” or bots. These agents or bots are AI-immersive applications that can become exceedingly dangerous because we are not governing them properly before putting them into production. Furthermore, AI is moving from providing insights and predictions to taking action (in a perfect world) on our behalf…but what if the action is not in the interest of the individual or enterprise? That is where a combination of modern IAM that understands agentic AI objects, new security approaches, and new governance models is needed, and it will be the thrust of TechVision’s focus (and hopefully enterprise and vendor focus) in 2025.

This has the very real potential to become the “shadow IT” of the Death Star. This is not gaslighting. This is not the Angel of Doom speaking. We have proven that as a species, we don’t really know how, or want to know how, to protect ourselves from the unintended consequences of our digital existence if such protection gets in the way of time-to-market, to put it mildly.

Agentic AI has the real potential to dramatically exacerbate organizations’ insider threat risk, because AI agents are “insiders”. We are creating AI agents in our image – warts and all. But it’s worse than that: AI agents can harm us by:

- Requiring large amounts of data that may compromise privacy or expose confidential information

- Being targets for bad actors if they are trained with proprietary data.

- Collaborating or colluding in harmful ways if agents are compromised in any number of ways, such as via influence operations and social engineering (just like us humans).

- Hallucinating and providing erroneous outputs because there is not enough reliable data to train AI systems, especially for complex tasks.

To name just a few of the very real threats.

Know Your Agent (KYA) and Identity and Access Management (IAM) are two sides of the same “information protection coin”:

- We define KYA as the process of identifying, analyzing, and addressing the risks associated with agentic AI behavior as it relates to an organization’s information management, access, processes and procedures – just like we do with the workers.

- Identity and Access Management (IAM) has been a cornerstone of cybersecurity since the inception of modern computing, and it underpins today’s Zero Trust authentication and access control. These bedrock principles apply to agentic AI every bit as much as they apply to human workers.

Agentic AI design and deployment governance must therefore have direct interaction with the enterprise IAM system(s) to Know Your Agentic AI. This IAM visibility includes accurately and temporally facilitating access to sensitive information and configuration capabilities. For example, an AI agent designed to analyze financial market data might need access to a specific database within a company’s system. IAM systems need to be able to identify and authenticate AI agents, which can be complex due to their dynamic nature and potential for rapid evolution. IAM would define the agent’s unique identity and grant it the necessary permissions to access the data while restricting access to other sensitive areas. This simple example should illustrate how mature IAM can lessen your risks associated with agentic AI error or malfeasance.

Many, if not all, enterprises today have some IAM infrastructure and are likely embarking on a Zero Trust strategy that leverages the existing IAM (and IGA / PAM) foundation. We strongly recommend that you Know Your Agentic AI in much the same way as the global financial services community has determined they Know Your Customer.

You must ensure your technical controls are reasonable and prudent given the threats, vulnerabilities, and potential consequences that your risk appetite allows. Indeed, a balance must be struck between KYA and IAM to best ensure that potential breaches are identified and mitigated before they happen. There are many advancements in the IAM capabilities of IGA, PAM, UEBA, risk scoring, trust scoring and emerging agentic AI security that can provide most organizations with the technology needed to reduce agentic AI risk. Do not delay getting your arms around this potentially crushing risk that is already alive, well and hungry.

One example of how an IAM vendor (Ping Identity) is rapidly ratcheting up agentic AI security support is through their Helix-branded IAM suite that has been enabled to configure AI agents with their own identities. As Ping describes, “these agents are more than just automated processes; they are sophisticated entities capable of understanding their operational contexts that interact securely with users. By endowing AI agents with distinct identities, Helix ensures that every interaction is authenticated and authorized in real-time, creating a new level of trust and security in AI operations.”

Other prominent IAM vendors that support AI agents include Okta, OneLogin, CyberArk, IBM, and BeyondTrust, with OneLogin being particularly recognized for its AI-backed adaptive authentication features and its capabilities for managing access to applications with AI-driven decision making. Subsequent research by TechVision will investigate in more detail what other similar vendors are doing to bring their IAM (and IGA) solutions up to “agentic AI standards”.

Additionally, we will look at some of the startup companies coming to market with a specific focus on agentic AI risk management (e.g., lumenova.ai), as these types of solutions will need to integrate with and leverage the IAM / IGA infrastructure to work reliably.

In summary, TechVision Research identifies the overall agentic AI risk management objectives in concert with existing process and technology infrastructure. We also highlight critical mitigation techniques and controls that can prevent the inadvertent enablement of agentic AI to cause significant damage to your organization. These insights will help you establish a philosophy of “know your agent” (KYA) so that people are put in positions to succeed while limiting the “blast radius” from a bot-induced mistake or nefarious action.

With over 30 years of helping our customers identify and mitigate these risks, TechVision can help with this process.

Introduction

In our recent report titled “Know Your Worker – The Intersection of Worker Risk and IAM”, we discussed how humans today don’t typically have the cyber literacy to fend off complex influence operations and social engineering attacks that put the organization’s information, brand, and reputation at great risk. We also noted that, according to Verizon’s 2024 Data Breach Investigations Report released in March 2024, such human worker failures account for 75% of all data breaches and ransomware attacks today, whether through negligence or malfeasance. Now we are creating an agentic AI environment where these same failings are present.

To paraphrase from this same report: to effectively address this most significant risk (i.e., insider threats), organizations must know precisely “who the AI agents are and what they intend to do”. Thus, a new axiom is born: “Know Your Agentic AI”, or KYA.

In this report, TechVision Research identifies the overall agentic AI risk management objectives in concert with existing process and technology infrastructure. We also highlight critical mitigation techniques and controls that can prevent the inadvertent enablement of agentic AI to cause significant damage to your organization. These insights will help you establish a philosophy of “know your agent” (KYA) so that people are put in positions to succeed while limiting the “blast radius” from a bot-induced mistake or nefarious action. We then conclude this review with a set of pragmatic recommendations and an enterprise action plan. We’ll start this process by examining Agentic AI risk as a basis for considering how enterprises can immediately begin addressing this risk.

Do any of you remember way back in the early-mid 2000’s, when cloud computing became predominant and was on every organization’s Top Three Initiatives list? We remember. We remember warning organizations against the threat of “shadow IT” growing exponentially as divisions or departments within organizations were rushing to “get something on the cloud” that could be rolled out super quickly and relatively cheaply. In this “model”, it was all about time-to-market and the common refrain was “we will bolt on security as soon as possible – we just have to get this application out there, or else we’ll be replaced by someone who will!” Fear motivates.

We have been battling the repercussions of unbridled deployments at any “cost” to our fundamental well-being ever since. What are we saying? This: as a general principle, humans’ thirst for profits and fame overwhelmed common sense and we now find ourselves in a world where internet-born fraud is pretty much invincible. We are playing the ultimate “whack-a-mole” game, but it is not at the amusement park. It affects every one of us due to the very real threats of identity theft, financial fraud, influence operations, social engineering, unaccountability for one’s actions, and so on.

Now, however, we have AI large language models/large language processing, generative AI, Deep Learning and Self-aware AI – all of which are being incorporated into “agents” or bots. These agents or bots are merely names given to AI-immersive applications that can become exceedingly dangerous because we are not governing them properly before putting them into Production.

This has the very real potential to become the “shadow IT” of the Death Star. This is not gaslighting. This is not the Angel of Doom speaking. We have proven that as a species, we don’t really know how or want to know how to protect ourselves from the unintended consequences of our digital existence if such protection gets in the way of time-to-market…and the AI vendors with their exponential rise in market capitalization are focused on delivering game-changing technology—not the security controls and governance that will be necessary for wide-scale, enterprise adoption.

We are creating AI agents in our image – warts and all. But it’s worse than that: AI agents can harm us by:

- Requiring large amounts of data, which can raise privacy concerns.

- Being targets for bad actors if they are trained with proprietary data.

- Collaborating or colluding in harmful ways if agents are compromised in any number of ways, such as via influence operations and social engineering (just like we humans).

- Hallucinating and providing erroneous outputs because there is not enough reliable data to train AI systems, especially for complex tasks.

To name just a few of the very real threats.

Examining AI Agent Risk

Agentic AI has the real potential to dramatically exacerbate organizations’ insider threat risk, because AI agents are “insiders”. Please let that sink in. AI agents as insider threats pose several risks, including technical, ethical, and socioeconomic risks.

Technical risks

- Errors and malfunctions: AI agents can make errors or malfunction, which can lead to data breaches.

- Security issues: AI agents can be used to automate cyberattacks.

- Coding logic errors: AI agents can make unintended or malicious coding errors.

- Data poisoning: Malicious data can be injected into the training data, which can compromise the AI’s decision-making.

Ethical risks

- Decision-making: AI agents can make decisions that are not aligned with human values.

- Accountability: Who is responsible when an AI system makes a faulty decision that harms someone?

- Collusion: AI agents could work together in harmful ways.

- Bias: AI agents can amplify biases present in the data they are trained on.

Socioeconomic risks

- Job displacement: AI agents could automate tasks that humans currently perform, which could lead to job displacement.

- Over-reliance: People could become overly reliant on AI agents, which could lead to disempowerment, or more likely, abdication of responsibility.

While many, if not most, organizations have had evolving and growing risk management and Governance, Risk, and Compliance (GRC) initiatives in place for the past two decades, the new focus on agentic AI risk is worth noting. Until now, risk management has centered on humans interacting with IT. As humans become less involved in the actual functioning of many organizational efficiency initiatives, there still needs to be a control, tied to an accountable person, that is watching the crown jewels. Agentic AI, as we briefly noted above, has the potential to cause the organization much damage if not appropriately secured. If roughly 75% of all breaches today are human-induced, and we are rolling out agentic AI in our human image, it would be foolish to believe that agentic AI can be trusted any more than humans can.

This raises the question, “what can be done?”, which we’ll discuss next.

AI Agent Threat Controls and Procedures Guidance

Today, “conventional wisdom” seems to indicate that in order to mitigate these risks and others, organizations can and should:

- Establish clear ethical guidelines,

- Prioritize data governance and cybersecurity,

- Educate the organization about AI agents,

- Improve the transparency of AI agents, and

- Implement “human-in-the-loop” oversight.

In this report, we focus primarily on the second and fourth items (prioritizing data governance and cybersecurity, and improving the transparency of AI agents). We will dive deeper into the other mitigation approaches in subsequent reports.

In April 2024, the National Security Agency’s Artificial Intelligence Security Center (NSA AISC) published the joint Cybersecurity Information Sheet Deploying AI Systems Securely in collaboration with the Cybersecurity and Infrastructure Security Agency (CISA), the Federal Bureau of Investigation (FBI), the Australian Signals Directorate’s Australian Cyber Security Centre (ASD ACSC), the Canadian Centre for Cyber Security (CCCS), the New Zealand National Cyber Security Centre (NCSC-NZ), and the United Kingdom’s National Cyber Security Centre (NCSC-UK).

The guidance provides best practices for deploying and operating externally developed artificial intelligence (AI) systems and aims to:

- Improve the confidentiality, integrity, and availability of AI systems.

- Ensure there are appropriate mitigations for known vulnerabilities in AI systems.

- Provide methodologies and controls to protect, detect, and respond to malicious activity against AI systems and related data and services.

According to CISA, there are three types of AI risk:

- Attacks that target AI systems

- Failures in the design and implementation of AI systems

- Attacks that use AI

CISA’s guidelines comprise four functions, the same four used in the NIST AI Risk Management Framework: Govern, Map, Measure, and Manage.

Govern

- As in the National Institute of Standards and Technology (NIST) cybersecurity framework, Govern sits at the center of the model, and is built on the foundation of a culture of AI risk management. The guidelines here support the establishment of policies, processes, and procedures that allow organizations to enjoy the benefits of AI while mitigating its risks. It follows a “secure by design” philosophy, where cybersecurity leaders build a culture in which security is a top priority.

- Among the different guidelines within Govern is the need to create a detailed plan for cybersecurity risk management, establish transparency in AI system use, and integrate AI threats, incidents, and failures into information-sharing mechanisms.

- Furthermore, organizations should establish roles and responsibilities with their AI vendors, invest in workforce training, and collaborate with industry groups or government agencies to stay on top of risk management tools and methodologies.

Map

- Mapping is key to understanding where and how AI systems are being used. The visibility into these systems allows security teams to assess, evaluate, and mitigate specific risks.

- The guidelines include documenting AI use cases, their risks, and mitigations, as well as conducting an impact assessment of the AI tool and the negative potential impact that could arise from the implementation.

- Security teams should also assess whether certain AI systems require human supervision to address any malfunctions or unintended consequences.

Measure

- Within this function, organizations are guided to develop systems capable of assessing, analyzing, and tracking AI risks. It asks security teams to identify repeatable methods that can monitor AI risks and impacts throughout the AI system lifecycle.

- As part of this function, security teams should define metrics for detecting and tracking known risks and incidents. Organizations should continuously test AI systems for errors and establish practices to prevent exposure of confidential information.

- AI systems should be developed and used with resilience in mind, which enables fast recovery from any type of disruption. Most importantly, teams should also establish processes for AI security reporting, to collect feedback from impacted stakeholders.

Manage

- The last of CISA’s guidelines covers the need to prioritize and act upon AI risks to safety and security. Organizations should establish and follow AI cybersecurity best practices, including the use of role-based access controls and logging all system use.

- Whenever possible, mitigations should be applied before systems or applications are deployed, and systems should be monitored for any kind of unusual or malicious behavior.

- When incidents arise, security teams and stakeholders should follow established incident response plans to restore and secure the AI system.

In keeping with the title (and thesis) of this TechVision Research report, we are now going to dive into how Identity and Access Management (IAM) should be a solid foundation for improving security and risk management of agentic AI consistent with the CISA guidelines.

IAM and Agentic AI Risk Management

In this section, we examine the intersection of agentic AI risk management and IAM by exploring the following contention:

KYA and IAM are two sides of the same “information protection coin”:

- We define KYA as the process of identifying, analyzing, and addressing the risks associated with agentic AI behavior as it relates to an organization’s information management, access, processes and procedures – just like we do with the workers.

- Identity and Access Management (IAM) has been a cornerstone of cybersecurity since the inception of modern computing, and it underpins today’s Zero Trust authentication and access control. These bedrock principles apply to agentic AI every bit as much as they apply to human workers.

Agentic AI design and deployment governance must therefore have direct interaction with the enterprise IAM system(s) to Know Your Agentic AI. This IAM visibility includes accurately and temporally facilitating access to sensitive information and configuration capabilities. For example, an AI agent designed to analyze financial market data might need access to a specific database within a company’s system. IAM systems need to be able to identify and authenticate AI agents, which can be complex due to their dynamic nature and potential for rapid evolution.

In the above context, IAM would define the agent’s unique identity and grant it the necessary permissions to access the data while restricting access to other sensitive areas. This simple example should illustrate how mature IAM can lessen your risks associated with agentic AI error or malfeasance. With this as a backdrop, let’s now look at IAM in a little more detail, starting with contextual IAM.

A Contextual IAM Overview

The first word in IAM is identity. An “identity” is a label applied to real-world objects: most often humans, but also Internet of Things (IoT) devices, services, applications and, more specifically in our current context, AI agents.

IAM is the process of establishing identities, managing identities/attributes and programmatic access to enterprise resources through these identities. IAM technologies are relatively mature today, but there are still many ongoing developments to improve the technology landscape. This is mainly because identities and information associated with those identities (context) are growing exponentially and this will continue for many years. It is important to note that many organizations today still struggle with inconsistent directories, multiple authoritative sources, lack of internal standards, and scalability challenges. So, while IAM technology has been around for 30+ years, it is still subject to inadequate design and implementation.

Nevertheless, there has been an increased understanding that IAM is key to cyber security, privacy, personalization, data sharing, application integration, and achieving Internet-scale digitization.

This is particularly important as we consider how to mitigate agentic AI-based insider threats via an IAM-based KYA program.

For a very brief rundown, here are some of the most important aspects of today’s enterprise IAM environments:

- Identity Registration

- Lifecycle Management

- Authentication

- Access Control

- Monitoring, Threat Detection and Remediation

We describe these below.

Registration

AI agent registration before release into Production is critical. In this process, a reasonable amount of information about the agent (such as its human owner, its purpose, its data and access requirements, its time-to-live and other key characteristics) needs to be captured, stored and maintained within the IAM subsystem.

The level of access to sensitive information resources should dictate the level of registration information required – just like with people. The enterprise needs to know what functions this particular agent can perform within the context of the enterprise’s data and systems, including system configuration.
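As a rough illustration of the registration step described above, the sketch below models a registration record and a validation check in Python. It is not drawn from any IAM product: the class `AgentRegistration`, the `validate` function, the two-level `sensitivity` scale and the `approver` field are all hypothetical names chosen for this example, and a real IAM subsystem would define its own schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentRegistration:
    """Hypothetical registration record for a single AI agent."""
    agent_id: str
    owner: str                 # accountable human owner
    purpose: str               # what the agent is meant to do
    resources: frozenset       # data and systems the agent needs
    sensitivity: str           # "low" or "high" (assumed two-level scale)
    registered_at: datetime
    time_to_live: timedelta
    approver: str = ""         # assumed extra detail for high-sensitivity access

    def expires_at(self) -> datetime:
        return self.registered_at + self.time_to_live

    def is_expired(self, now: datetime) -> bool:
        return now >= self.expires_at()


def validate(reg: AgentRegistration) -> list:
    """Return a list of problems; an empty list means the record is acceptable."""
    problems = []
    if not reg.owner:
        problems.append("missing human owner")
    if not reg.purpose:
        problems.append("missing purpose")
    if not reg.resources:
        problems.append("no resources declared")
    if reg.time_to_live <= timedelta(0):
        problems.append("time-to-live must be positive")
    # More sensitive access demands more registration detail.
    if reg.sensitivity == "high" and not reg.approver:
        problems.append("high-sensitivity agents need a named approver")
    return problems


registered = datetime(2025, 1, 30, tzinfo=timezone.utc)
market_agent = AgentRegistration(
    agent_id="agent-001",
    owner="j.doe",
    purpose="analyze financial market data",
    resources=frozenset({"db:market_data"}),
    sensitivity="high",
    registered_at=registered,
    time_to_live=timedelta(days=90),
)
print(validate(market_agent))
```

The design choice worth noting is that the record carries its own expiry: an agent whose time-to-live has lapsed can be refused at authentication time without any separate cleanup job.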

Lifecycle Management

The lifecycle of the agent must be managed closely as the functionality and “awareness” of the AI agent will likely change over time. This requires that agent identities be audited and access rights re-certified on a continuing basis. This key requirement is met by a very important set of IAM services known as Identity Governance and Administration (IGA), which has traditionally been oriented toward the workers (people) of the organization. Now, this lifecycle management process must include registration and re-certification of agentic AI.

IGA helps detect and mitigate accumulation of privilege, which is a tremendous risk to many organizations who let workers accumulate access rights for the duration of their employment, often not in concert with the workers’ current job requirements. This may also happen with AI agents as they “mature” and adapt through self-learning/machine learning, etc.

Identity lifecycle management and IGA help enforce separation of duties and enable strong Privileged Access Management (PAM) to limit the functions that systems and agentic AI administrators may perform. Agentic AI administrators already exist. The train is leaving the station at breakneck speed. PAM needs to be extrinsically integrated with AI agents that can perform any administrative or sensitive tasks anywhere in the IT infrastructure – on-premises and in-cloud.
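To make the idea of detecting accumulated privilege more concrete, here is a minimal Python sketch of two checks an IGA process might run against an agent. The function names, the entitlement strings, and the 90-day and 180-day thresholds are assumptions made for illustration only; they do not describe any particular IGA product.

```python
from datetime import date, timedelta


def unused_entitlements(granted, last_used, today, max_idle_days=90):
    """Entitlements the agent holds but has not exercised within the idle window.

    granted   -- set of entitlement names currently assigned to the agent
    last_used -- dict mapping entitlement name to the date it was last used
    """
    cutoff = today - timedelta(days=max_idle_days)
    return sorted(
        name for name in granted
        if last_used.get(name) is None or last_used[name] < cutoff
    )


def recertification_due(last_certified, today, interval_days=180):
    """True when the agent's access rights are overdue for re-certification."""
    return today - last_certified >= timedelta(days=interval_days)


today = date(2025, 1, 30)
granted = {"db:market_data:read", "db:hr:read", "cfg:firewall:write"}
last_used = {
    "db:market_data:read": date(2025, 1, 20),
    "db:hr:read": date(2024, 6, 1),
    # "cfg:firewall:write" has never been used
}
print(unused_entitlements(granted, last_used, today))
print(recertification_due(date(2024, 7, 1), today))
```

Entitlements flagged by the first check are candidates for revocation, while the second check would trigger the re-certification review by the agent's human owner.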

Agent Authentication

Agentic AI authentication is how an agent identifies itself unambiguously to the enterprise cybernetic systems. A mature IAM implementation requires agentic AI to authenticate using methods commensurate with the risk of information loss, including a combination of factors like unique identifiers, cryptographic keys, and behavioral analysis – rather than relying solely on traditional user credentials like passwords.
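One way to picture key-based agent authentication is a challenge-response exchange. The sketch below uses only the Python standard library and assumes a shared secret provisioned to the agent at registration; the `AGENT_KEYS` store and the function names are hypothetical. A production deployment would more likely rely on asymmetric keys or certificates held in a secrets manager, so treat this as an illustration of the principle rather than a recommended design.

```python
import hashlib
import hmac
import secrets

# Hypothetical key store: agent_id -> shared secret provisioned at registration.
AGENT_KEYS = {"agent-001": secrets.token_bytes(32)}


def issue_challenge() -> bytes:
    """Server side: create a fresh random challenge for each login attempt."""
    return secrets.token_bytes(16)


def sign_challenge(key: bytes, agent_id: str, challenge: bytes) -> bytes:
    """Agent side: prove possession of the key by signing the challenge."""
    message = agent_id.encode() + b"|" + challenge
    return hmac.new(key, message, hashlib.sha256).digest()


def verify_agent(agent_id: str, challenge: bytes, response: bytes) -> bool:
    """Server side: accept only a known agent with a valid signature."""
    key = AGENT_KEYS.get(agent_id)
    if key is None:
        return False  # unregistered agents are never authenticated
    expected = sign_challenge(key, agent_id, challenge)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, response)


challenge = issue_challenge()
response = sign_challenge(AGENT_KEYS["agent-001"], "agent-001", challenge)
print(verify_agent("agent-001", challenge, response))
```

Because each challenge is random and used once, a captured response cannot simply be replayed against a later challenge. Behavioral analysis, the other factor mentioned above, would sit alongside this check rather than replace it.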

Furthermore, as we detailed in our recent report titled “Personhood Credentials: An Emerging Solution to AI Deception”, the rise of AI-generated deception, including deepfakes and synthetic personas, presents significant challenges in maintaining trust and authenticity in digital environments. TechVision advises that Personhood Credentials (PHCs) offer a novel solution by providing cryptographic proof that an individual is human, without revealing personal information. Unlike traditional identity systems, PHCs focus solely on verifying humanness, using privacy-preserving techniques like zero-knowledge proofs. These credentials can be issued by various entities such as governments or tech companies and are designed to combat AI-driven fraud, misinformation, and manipulation. With their decentralized issuance model and privacy-preserving features, PHCs provide a robust defense against large-scale manipulation while maintaining user anonymity. As AI technologies continue to evolve, PHCs represent a simple yet critical tool for safeguarding online trust and protecting against the misuse of AI at scale. We strongly encourage organizations to invest in approaches to authentication such as this so that they can minimize, if not eliminate AI-centric fraud emanating from their own agentic AI.

Access Control

IAM / access control systems must enforce least privilege access and prevent unauthorized actions by AI agents. This functionality should be used to maintain blacklists of threat indicators and files that AI agents are disallowed from accessing. A continuous monitoring and feedback loop should be established to identify and correct any unwanted actions resulting from AI agent inaccuracies.
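The combination of least privilege and a list of disallowed resources can be sketched as a default-deny check. In the Python example below, `AgentPolicy`, `is_permitted`, and the resource paths are hypothetical; the assumption is that the denylist always overrides an explicit grant, which is one reasonable policy but not the only one.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy: explicit grants plus a denylist."""
    allowed: frozenset            # set of (action, resource) pairs
    denied_patterns: tuple = ()   # shell-style patterns that are always refused


def is_permitted(policy: AgentPolicy, action: str, resource: str) -> bool:
    # The denylist wins over any grant.
    if any(fnmatchcase(resource, pattern) for pattern in policy.denied_patterns):
        return False
    # Default deny: anything not explicitly granted is refused.
    return (action, resource) in policy.allowed


policy = AgentPolicy(
    allowed=frozenset({
        ("read", "db/market_data"),
        ("read", "db/hr/salaries"),   # a grant that the denylist will override
    }),
    denied_patterns=("db/hr/*",),
)
print(is_permitted(policy, "read", "db/market_data"))
print(is_permitted(policy, "read", "db/hr/salaries"))
```

Every refusal from a check like this is also a useful signal for the monitoring and feedback loop described above.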

Monitoring, Threat Detection and Remediation

The IAM environment must proactively monitor all authentication and access activity and apply user and entity behavior analytics (UEBA), risk scoring, and other effective run-time (proactive) safeguards to ensure an agent isn’t erroneously or malevolently accessing/retrieving sensitive information or changing system configurations.

Baseline behaviors should be established to identify outlier transactions, which can then be addressed through automatic real-time remediation. These real-time monitoring capabilities can alert IAM to automatically suspend and remediate rogue transactions while forwarding any unresolved issues to human operators for manual review.
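A very simple form of baselining is to compare an agent's current activity with its own history. The Python sketch below uses a z-score over past hourly record counts; the function name, the thresholds, and the three outcomes are assumptions for illustration, and commercial UEBA tools use far richer models than this.

```python
from statistics import mean, stdev


def classify_activity(baseline, observed, review_z=3.0, suspend_z=6.0):
    """Compare an observed activity count with the agent's own baseline.

    baseline -- past counts for this agent (at least two values)
    observed -- the count seen in the current window
    Returns "ok", "review" (forward to a human operator) or "suspend"
    (automatic remediation).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        z = 0.0 if observed == mu else float("inf")
    else:
        z = abs(observed - mu) / sigma
    if z >= suspend_z:
        return "suspend"
    if z >= review_z:
        return "review"
    return "ok"


# Records retrieved per hour by one agent over recent, normal operation.
hourly_reads = [100, 110, 95, 105, 90]
for count in (104, 130, 500):
    print(count, classify_activity(hourly_reads, count))
```

The middle outcome reflects the split described above: clear outliers are suspended automatically, while borderline cases go to human operators for manual review.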

These are just a few of the many capabilities that an IAM infrastructure provides to the enterprise in terms of cybersecurity. These capabilities must be extended immediately to support agentic AI in the context of providing services and protecting the organization. In fact, IAM is the cornerstone of the Zero Trust Architecture movement that we will discuss next.

Agent Risk, IAM and Zero Trust

TechVision Research has published several reports focusing on Zero Trust networking and enablement. Our position is that IAM is the essential underlying infrastructure that enables the adoption of zero trust architecture. The National Institute of Standards and Technology (NIST) describes Zero Trust Architecture as “an end-to-end approach to network/data security that encompasses identity, credentials, access management, operations, endpoints, hosting environments, and the interconnecting infrastructure.”

What this definition implies is that an enterprise should only trust someone or something whose trust has been granted, and is continually re-verified, through Identity and Access Management (IAM) services designed to:

- Provide proper controls to securely onboard, manage, and offboard agent identities,

- Enable sufficient authentication and authorization mechanisms as per enterprise risk management, and

- Provide an extensive proactive alerting and reactive audit trail of all agentic AI access to the enterprise resources.

Because Zero Trust is not a product or even a prescribed implementation strategy (despite some vendors’ insistence), it makes sense that the decisions made about solving for Zero Trust across the enterprise demonstrate the consistent application of the following capabilities:

- Least Privilege – An agent is granted the appropriate access and entitlements for a resource based on the need to perform its intended function and only during the time the function is being performed.

- Strong Verification – Move beyond passwords to advanced methods of authentication, and practice progressive collection and disposal of the credentials required to achieve least-privilege functional execution.

- Risk-based enforcement – Evolve to decision making based on factors beyond a strongly verified agent identity. Factors such as resource value, location, device and network security postures, and agent/user behavior are included in access/entitlement decisions in a least privilege regime.

- Continuous Evaluation of Assurance – Identify and assess levels of risk to the achievement of business objectives, using a combination of monitoring and auditing capabilities, such as:

  - Analyzing trends

  - Correlating outliers

  - Highlighting potential exposures

  - Evaluating and remediating exposures

- Continuous Evaluation of Entitlement – Monitor and review the application of policies that grant, resolve, enforce, revoke, and administer fine-grained access entitlements to agentic AI (and human workers!) for resources.
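Risk-based enforcement, as listed above, can be illustrated with a small decision function that looks beyond a verified identity. In this Python sketch the `AccessContext` fields, the scoring weights, and the thresholds are all invented for the example; an enterprise would calibrate its own factors and values against its risk appetite.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessContext:
    """Hypothetical run-time context for a single access request."""
    identity_verified: bool
    resource_value: int        # 1 (low) to 5 (crown jewels), assumed scale
    network_trusted: bool
    device_compliant: bool
    behavior_anomalous: bool


def risk_score(ctx: AccessContext) -> int:
    """Add up illustrative weights for each risk factor."""
    score = ctx.resource_value * 10
    if not ctx.network_trusted:
        score += 20
    if not ctx.device_compliant:
        score += 20
    if ctx.behavior_anomalous:
        score += 30
    return score


def decide(ctx: AccessContext, step_up_at: int = 50, deny_at: int = 80) -> str:
    """Return "allow", "step_up" (require stronger verification) or "deny"."""
    if not ctx.identity_verified:
        return "deny"   # a verified identity is necessary but not sufficient
    score = risk_score(ctx)
    if score >= deny_at:
        return "deny"
    if score >= step_up_at:
        return "step_up"
    return "allow"


routine = AccessContext(True, 2, True, True, False)
risky = AccessContext(True, 5, False, True, True)
print(decide(routine), decide(risky))
```

Evaluating this decision on every request, rather than once at login, is what ties it to the continuous evaluation capabilities in the same list.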

Each of these capabilities identified above for Zero Trust should be extended to agentic AI deployment.
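As a rough sketch of how Least Privilege and Risk-Based Enforcement might combine for an agent at runtime, the example below first checks for a time-bound entitlement and then applies a simple risk score. The `Entitlement` fields, the risk weights, the 0.7 threshold, and the `step_up` outcome are illustrative assumptions, not values drawn from any standard or product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Entitlement:
    """Hypothetical grant, valid only while the function is being performed."""
    agent_id: str
    resource: str
    action: str
    not_before: datetime
    not_after: datetime


# Assumed weights and threshold, chosen only for illustration.
RESOURCE_RISK = {"low": 0.1, "medium": 0.3, "high": 0.6}
RISK_THRESHOLD = 0.7


def risk_score(resource_value, trusted_network, behavior_anomaly):
    """Combine resource value, network posture, and behavior into a 0..1 score."""
    score = RESOURCE_RISK[resource_value]
    if not trusted_network:
        score += 0.2
    score += 0.4 * behavior_anomaly  # behavior_anomaly is expected in 0..1
    return min(score, 1.0)


def decide(entitlements, agent_id, resource, action, now,
           resource_value, trusted_network, behavior_anomaly):
    """Return 'deny', 'allow', or 'step_up' for a single access request."""
    # Least privilege: an explicit grant must cover this request right now.
    granted = any(
        e.agent_id == agent_id
        and e.resource == resource
        and e.action == action
        and e.not_before <= now <= e.not_after
        for e in entitlements
    )
    if not granted:
        return "deny"
    # Risk-based enforcement: a valid grant alone is not enough.
    score = risk_score(resource_value, trusted_network, behavior_anomaly)
    return "allow" if score < RISK_THRESHOLD else "step_up"
```

Evaluating `decide` on every request, rather than once at login, is one way to reflect the continuous evaluation described above: the same agent with the same grant can receive a different answer when its context changes.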

Remember, Zero Trust is about always asking if this activity/action is “appropriate” – during runtime.

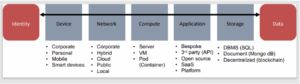

To determine that level of appropriateness, you need to consider the risk, the activity, and the identity and the associated credentials to determine authentication, access, and entitlements. This means that the “identity is the perimeter” and it is the one piece of the puzzle that must be secured with the utmost care to preserve the integrity of the information ecosystem as the agentic AI “identity” traverses from the device, over the network to the actual data, as illustrated below.

Figure 1: Identity Based Zero Trust Applies to Agentic AI

So, what does the current vendor and solution landscape for agentic AI risk management look like? This we discuss next.

Vendors Taking Notice

While the world races ahead (with reckless abandon?) and deploys agentic AI as quickly as possible, cybersecurity and IAM vendors are scrambling to catch up. One example is Ping Identity, which is rapidly ratcheting up agentic AI security support through its Helix-branded IAM suite, which can configure AI agents with their own identities. As Ping describes, “these agents are more than just automated processes; they are sophisticated entities capable of understanding their operational contexts that interact securely with users. By endowing AI agents with distinct identities, Helix ensures that every interaction is authenticated and authorized in real-time, creating a new level of trust and security in AI operations.”

Other prominent IAM vendors that support AI agents include Okta, OneLogin, CyberArk, IBM, and BeyondTrust, with OneLogin being particularly recognized for its AI-backed adaptive authentication features and its capabilities for managing application access with AI-driven decision making. Subsequent research by TechVision will investigate in more detail what other vendors are doing to bring their IAM (and IGA) solutions up to “agentic AI standards”.

Additionally, we will look at some of the startup companies coming to market with a specific focus on agentic AI risk management (e.g., lumenova.ai), as these types of solutions will need to integrate with and leverage the IAM / IGA infrastructure to work reliably.

In the following section, we’ll provide some guidance on how to address AI agent risk for your organization.

What To Do

Building a comprehensive understanding of the risks agentic AI systems pose is challenging given the technology’s relative novelty, versatility, and customizability. Consequently, deconstructing the AI agent risk repertoire requires broad consideration of many critical factors, including:

- The intended use and purpose of the agent, the real-world objectives or tasks for which it’s leveraged

- The rate at which it adapts to and/or learns from new experiences or data

- Its respective capabilities and limitations

- The degree of involvement in consequential decision-making procedures

- How well it integrates with existing digital tools and infrastructures

- The concrete skills the organization requires to utilize agentic AI responsibly and effectively (ai/blog/ai-agents-potential-risks/)

These sorts of factors help your organization govern agentic AI in concert with the IAM, IGA, and risk management (Governance, Risk and Compliance – GRC) solutions already in place. The first thing to do, though, is discover what agents you may already have running in your distributed IT environment.

Discover

There are solutions, such as IGA and PAM tools, that help organizations identify “who has access to what” in accordance with baseline risk management. These tools can help locate “what has access to what” as well. In addition, there are startups and small companies, Nudge Security being one, that focus specifically on detecting agentic AI across the organization. Their solution is designed to discover GenAI and AI-powered apps, accounts, and authentication methods (including the ones that network monitoring and app integrations miss) to help create and maintain a complete and continuous inventory. The tool also helps you identify which third-party apps may share your data with AI, and inspects the organization’s SaaS software supply chain to give visibility into what AI is used in your SaaS apps.
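The core idea of a continuous inventory can be sketched as a reconciliation between the agents you have registered and the identities actually observed accessing resources. This is a simplified illustration, not a description of how any named product works; the `reconcile` function, the `client_id` field, and the three category names are assumptions for the example.

```python
def reconcile(registered_ids, observed_events):
    """Compare a registered agent inventory with observed access events.

    registered_ids: iterable of agent identifiers known to IAM/IGA.
    observed_events: iterable of dicts with a 'client_id' key, for example
    rows exported from access logs (a hypothetical input format).
    """
    registered = set(registered_ids)
    observed = {event["client_id"] for event in observed_events}
    return {
        # Seen in the environment but never onboarded: candidate shadow agents.
        "shadow": sorted(observed - registered),
        # Onboarded but not seen: candidates for review or offboarding.
        "dormant": sorted(registered - observed),
        # Onboarded and active.
        "known": sorted(observed & registered),
    }
```

Run on a schedule, a comparison like this turns discovery from a one-time project into an ongoing control, with the "shadow" list feeding directly into onboarding or removal decisions.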

Govern

Remember that the emerging “agentic AI workforce” is not going to be onboarded through Human Resources or Contractor Management platforms, the way human workers are typically vetted and handled. This fact uncovers a significant set of modifications required to integrate agent onboarding more stringently with the organization’s Secure Application Development processes. The hype and rush to market around agentic AI are already leading to a rash of shadow IT instances centered on agentic AI rollout. The danger of not governing this “shoot first, ask questions later” style of deployment should be obvious. What is even more concerning, as if that were possible, is that your vendors, partners and managed service providers are deploying agentic AI, too. The immense challenges we have faced over the last 20 years in managing the risk associated with information access are now even more pronounced and problematic. In many cases today, organizations do not have a reasonable control architecture and process set to handle the workers coming into and going from these third-party IT environments, all of whom are being granted some level of access to your data to perform their functions. Now consider agentic AI in there, as well.

It should be no surprise that you must turn your attention to the very real threats posed by the third party’s own non-human workforce. This is done by identifying their high-consequence information protection risks and then increasing the focus on improving personnel, technical and procedural controls for key individuals who have access to high-consequence information and systems. This requires an actionable organizational and technical control architecture, along with process improvements, to help reduce the potential damage (i.e., the “blast radius”) to the organization if (or when) an agent makes a mistake or goes rogue.
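One way to make “blast radius” concrete is to enumerate every resource an agent could reach, both directly and through other agents it is allowed to invoke. The sketch below assumes two hypothetical inputs, a `grants` map of agent to resources and a `delegations` map of agent to the agents it can call; real environments would derive these from IAM, IGA and PAM data.

```python
from collections import deque


def blast_radius(agent_id, grants, delegations):
    """Return the set of resources reachable by an agent.

    grants: dict mapping agent id -> iterable of resources it can access.
    delegations: dict mapping agent id -> iterable of agents it can invoke.
    Both structures are assumptions made for this illustration.
    """
    seen = {agent_id}
    queue = deque([agent_id])
    resources = set()
    # Breadth-first walk over the delegation graph; 'seen' guards against cycles.
    while queue:
        current = queue.popleft()
        resources.update(grants.get(current, ()))
        for callee in delegations.get(current, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return resources
```

Intersecting the result with a list of high-consequence resources gives a short, reviewable answer to the question of what could be damaged if this one agent makes a mistake or goes rogue.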

Remember, you cannot protect what you do not know.

Conclusions and Recommendations

Many, if not all, enterprises today have some IAM infrastructure and are likely embarking on a Zero Trust strategy that leverages the existing IAM (and IGA / PAM) foundation. We strongly recommend that you Know Your Agentic AI in much the same way that the global financial services community has determined it must Know Your Customer.

You must ensure your technical controls are reasonable and prudent given the threats, vulnerabilities, and potential consequences your risk appetite allows. Indeed, a balance must be struck between KYA and IAM to best ensure potential breaches are identified and mitigated before they happen. There are many advancements in the IAM capabilities of IGA, PAM, UEBA, risk scoring, trust scoring and emerging agentic AI security that can provide most organizations with the technology needed to reduce agentic AI risk. Do not delay getting your arms around this potentially crushing risk that is already alive, well and hungry.

Lastly, this report acts as a foundational document for our deeper understanding of agentic AI risk. In the near future, TechVision Research will drill down into the IAM, IGA, PAM and all related capabilities in more detail to help you gain a clear understanding of how a well-defined, deployed and managed IAM infrastructure reduces agentic AI risk significantly.

Action Plan

Those organizations that are best prepared to protect their broad range of critical information assets in a secure-but-user-friendly ecosystem will be able to identify and mitigate agentic AI risk. They will do this by developing a comprehensive IAM architecture and deployment strategy that addresses the entire agentic AI landscape at work or emerging within the organization. TechVision recommends a structured approach to identifying key agentic AI risks across this spectrum and has developed a Reference Architecture that is very useful in evaluating the set of capabilities necessary for your future state KYA foundation. With over 30 years of helping our customers identify and mitigate these risks, TechVision can help with this process.

About TechVision

World-class research requires world-class consulting analysts, and our team is just that. Gaining value from research also means having access to research. All TechVision Research licenses are enterprise licenses; this means everyone that needs access to content can have access to content. We know major technology initiatives involve many different skillsets across an organization and limiting content to a few can compromise the effectiveness of the team and the success of the initiative. Our research leverages our team’s in-depth knowledge as well as their real-world consulting experience. We combine great analyst skills with real world client experiences to provide a deep and balanced perspective.

TechVision Consulting builds off our research with specific projects to help organizations better understand, architect, select, build, and deploy infrastructure technologies. Our well-rounded experience and strong analytical skills help us separate the “hype” from the reality. This provides organizations with a deeper understanding of the full scope of vendor capabilities, product life cycles, and a basis for making more informed decisions. We also support vendors in areas such as product and strategy reviews and assessments, requirement analysis, target market assessment, technology trend analysis, go-to-market plan assessment, and gap analysis.

TechVision will provide regular updates on the latest developments with respect to the issues addressed in this report.

About the Authors

Doug Simmons brings more than 30 years of experience in IT security, risk management and identity and access management (IAM). He focuses on IT security, risk management and IAM. Doug holds a double major in Computer Science and Business Administration.

While leading consulting at Burton Group for 10 years and security and identity management consulting at Gartner for 5 years, Doug performed hundreds of engagements for large enterprise clients in multiple vertical industries including financial services, health care, higher education, federal and state government, manufacturing, aerospace, energy, utilities and critical infrastructure.

Gary Rowe is a seasoned technology analyst, consultant, advisor, executive and entrepreneur. Mr. Rowe helped architect, build and sell two companies and has been at the forefront of the standardization and business application of core infrastructure technologies over the past 35 years. Core areas of focus include identity and access management, blockchain, Internet of Things, cloud computing, security/risk management, privacy, innovation, AI, new IT business models and organizational strategies.

He was President of Burton Group, the leading technology infrastructure research and consulting firm, from 1999 to 2010. Mr. Rowe grew Burton to over $30 million in revenue on a self-funded basis, sold Burton to Gartner in 2010 and supported the acquisition as Burton President at Gartner.