Data-Centric Security Playbook

Practical Implementation for CISOs and Chief Architects

EXECUTIVE CARD: The “Why” & “What”

The Outcome Statement

Reduce data breach impact by 80% and enable secure collaboration at scale by moving access decisions from the perimeter to the data itself, while accelerating time-to-value for AI and cloud initiatives.

The Shift: Old Way vs. New Way

| Perimeter-Centric (Legacy) | Data-Centric (2026) |
| --- | --- |
| Assume trust inside the network; defend the edge | Assume zero trust; verify access at the data layer |
| Data protection happens after access is granted | Data protection is the access control mechanism |
| Centralized control; rigid policies | Distributed enforcement; context-aware, dynamic policies |
| Data classified manually; inconsistently labeled | Automated discovery and classification; persistent labeling |
| Breach = full data exposure (encryption afterthought) | Breach = limited exposure (encryption is foundational) |
| Compliance audited post-incident | Compliance demonstrated continuously via audit trails |
| Identity = who you are; Data access = role-based | Identity = who/what you are; Data access = data-context-aware |
| AI/ML gets broad data access (high risk) | AI/ML gets scoped, logged, governed data access (safe) |

Board Narrative (3-Minute Talking Track)

Opening:

“Our traditional security model assumes the network perimeter is our defense. But today, data lives everywhere—cloud, on-premises, in agents’ hands—and that perimeter is gone. Cybercriminals aren’t attacking our firewalls anymore; they’re targeting data directly. We need to shift our defense to the data itself.”

The Risk:

“Right now, if someone breaches our network, they can access almost anything once inside. With AI and cloud adoption accelerating, we’re actually expanding data access without corresponding controls. That’s a recipe for a seven-figure breach that could go undetected for months.”

The Solution:

“Data-centric security means encrypting data at the source, controlling access at the data layer (not just the network layer), and monitoring every access. Even if someone compromises a user account, the data itself remains protected. This directly reduces breach impact and accelerates our regulatory compliance.”

The Business Case:

“We’ll achieve this through: (1) automated data discovery and classification, (2) encryption and policy-driven access control, and (3) continuous monitoring tied to our SOC. Cost: ~$X over 18 months; ROI: breach reduction, faster incident recovery, and 30% faster AI/ML time-to-market because we can safely share governed data.”

THE ORGANIZATIONAL MODEL: The “Who”

RACI Matrix: Data-Centric Security Responsibilities

| Activity / Decision | CISO | Chief Architect | Data Owner | IAM/PAM Team | Security Operations (SOC) | Compliance |
| --- | --- | --- | --- | --- | --- | --- |
| Data Discovery & Classification | C | R | A | C | C | C |
| Data Risk Assessment | A | R | R | C | C | A |
| Encryption Key Management | C | R | A | R | C | C |
| Access Policy Design | R | A | C | R | C | C |
| Monitoring & Auditing | C | C | C | C | R | A |
| Incident Response | A | C | C | C | R | C |
| Vendor/Third-Party Risk | A | C | C | C | C | R |
| Training & Awareness | R | C | A | C | C | C |
| Governance & Policy | A | C | R | C | C | A |
| Budget & Resource Planning | A | R | C | C | C | C |

Legend: R = Responsible (does the work), A = Accountable (final decision), C = Consulted (input sought), I = Informed (kept in loop)

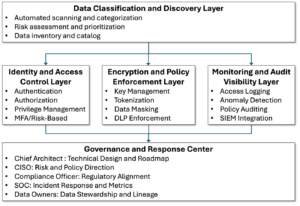

Target Operating Model (TOM) Diagram

Key Integration Points:

- IAM → Encryption Layer: Every authenticated access triggers policy evaluation

- Monitoring → IAM: Anomalies trigger re-authentication or access revocation

- Data Catalog → Encryption: Classification determines key assignment and rotation schedules

- SOC → All Layers: Centralized dashboarding for cross-layer threat correlation
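Two of these integration points can be sketched as small lookup rules. The example below is illustrative only: the anomaly-score scale, both thresholds, and the rotation intervals are hypothetical values chosen for the sketch, not recommendations from this playbook.

```python
from enum import Enum
from typing import Optional


class Response(Enum):
    ALLOW = "allow"
    REAUTHENTICATE = "reauthenticate"
    REVOKE = "revoke"


# Hypothetical thresholds on an anomaly score normalized to 0.0-1.0.
REAUTH_THRESHOLD = 0.5
REVOKE_THRESHOLD = 0.8

# Hypothetical key rotation intervals (days) per classification level.
# None means no managed key is assigned.
KEY_ROTATION_DAYS = {
    "PUBLIC": None,
    "INTERNAL": 365,
    "CONFIDENTIAL": 90,
    "RESTRICTED": 30,
}


def respond_to_anomaly(score: float) -> Response:
    """Monitoring -> IAM: map an anomaly score to a session response."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score >= REVOKE_THRESHOLD:
        return Response.REVOKE
    if score >= REAUTH_THRESHOLD:
        return Response.REAUTHENTICATE
    return Response.ALLOW


def rotation_days(classification: str) -> Optional[int]:
    """Data Catalog -> Encryption: classification picks the rotation schedule."""
    return KEY_ROTATION_DAYS[classification.upper()]
```

In practice these tables would live in the policy engine and key management system rather than in application code; the point is that both decisions reduce to a rule keyed on classification or risk.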

THE ARCHITECTURAL CORE: The “How” (Design)

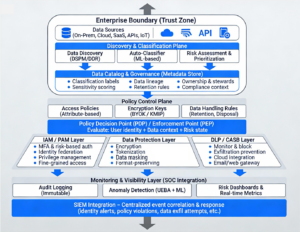

Reference Architecture: Data-Centric Security (Logical View)

Control Planes Annotated:

- Discovery & Classification — What data do we have, and what are its rules?

- Policy Control — Who can access what, and is it encrypted?

- Monitoring & Visibility — What’s happening, and what do we do about it?

Capability Map: Technical Functions Required

Tier 1 (Foundation — Months 1-3):

- ☐ Data discovery & inventory across on-premises, cloud, SaaS

- ☐ Automated data classification (PII, financial, IP, health)

- ☐ Encryption key provisioning (at-rest, in-transit)

- ☐ Basic RBAC & MFA implementation

- ☐ Audit logging and retention (6+ months)

Tier 2 (Core — Months 3-12):

- ☐ Policy-driven access control (ABAC)

- ☐ Data masking & tokenization for high-risk data

- ☐ DLP endpoint agents and network monitoring

- ☐ CASB integration for cloud app monitoring

- ☐ SIEM integration and alerting

- ☐ Incident response automation (block, quarantine, notify)

Tier 3 (Advanced — Months 12+):

- ☐ Anomaly detection (UEBA, behavioral analytics)

- ☐ Real-time data lineage and impact analysis

- ☐ AI/ML-powered risk scoring and access prediction

- ☐ Continuous compliance dashboards (GDPR, HIPAA, etc.)

- ☐ Autonomous response (revoke access, trigger re-auth, escalate)

- ☐ Advanced encryption patterns (homomorphic, zero-knowledge)

Design Patterns: Control and Data Flow

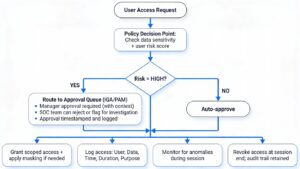

Pattern 1: The “Human-in-the-Loop” Pattern

For high-sensitivity data and high-risk access requests

Use Case: Executive accessing customer PII, third-party vendor access to source code, new contractor accessing financial data.

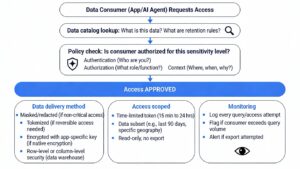

Pattern 2: The “Data-as-a-Service” (Governed Sharing) Pattern

For controlled data sharing with internal teams and AI/ML systems

Use Case: Marketing analytics team accessing masked customer profiles, ML training pipeline accessing de-identified health data, BI tool pulling sales data for dashboards.

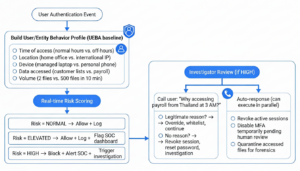
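A minimal sketch of the governed view such a consumer might receive, assuming a customer record with `customer_id`, `email`, and `segment` fields (hypothetical names). The token here is a keyed HMAC pseudonym, which is one simple stand-in for tokenization; a vault-based tokenization service would behave differently.

```python
import hashlib
import hmac


def mask_email(email: str) -> str:
    """Keep the first character and the domain; hide the rest."""
    local, _, domain = email.partition("@")
    if not domain:
        return "***"
    return f"{local[:1]}***@{domain}"


def tokenize(value: str, key: bytes) -> str:
    """Deterministic keyed pseudonym (HMAC-SHA256, truncated for readability)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def governed_view(record: dict, key: bytes) -> dict:
    """Return only the fields a downstream analytics consumer is allowed to see."""
    return {
        "customer_token": tokenize(record["customer_id"], key),
        "email": mask_email(record["email"]),
        "segment": record["segment"],
    }


if __name__ == "__main__":
    demo_key = b"demo-key-not-for-production"  # assumption: real keys come from a KMS
    row = {"customer_id": "C-1001", "email": "alice@example.com", "segment": "retail"}
    print(governed_view(row, demo_key))
```

Because the token is deterministic for a given key, analysts can still join datasets on `customer_token` without ever seeing the raw identifier.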

Pattern 3: The “Insider Threat Detection” Pattern

For continuous monitoring and anomaly-driven response

Use Case: Departing employee suddenly downloading company source code, contractor accessing data outside contract scope, compromised account exhibiting abnormal behavior.

THE EXECUTION ROADMAP: The “When”

Maturity Model: Crawl → Walk → Run

CRAWL Phase (0–3 months): Foundational Visibility

Objective: Get visibility into what data you have and where it lives.

| Activity | Owner | Deliverable | Success Metrics |
| --- | --- | --- | --- |
| Data Discovery Sprint | Chief Architect + DSPM vendor | Inventory of 80%+ of sensitive data across on-prem, cloud, SaaS | Asset count, coverage %, top 10 sensitive data types identified |
| Classification Framework | Data Owners + Compliance | Defined taxonomy (e.g., Public, Internal, Confidential, Restricted) | Classification schema document, examples per level, ownership matrix |
| Baseline Encryption | Security Ops | Encryption enabled for databases, file shares, backup systems | % of critical data encrypted, key provisioning process live |
| Audit Logging | SOC | Central log aggregation (SIEM) capturing access to top 20 datasets | Log retention enabled, no gaps, searchable within 24 hrs |
| Policy Skeleton | CISO + Compliance | Data classification, access control, incident response policies (draft) | Policies approved by leadership, ready for communication |

Milestones:

- Week 2: Data discovery tool deployed, scanning active

- Week 4: 80%+ coverage achieved; top 100 sensitive datasets tagged

- Week 8: Encryption baseline established; key rotation process live

- Week 12: Audit logging integrated with SIEM; policies drafted & approved

ROI Indicator: Reduce time-to-detect data exfiltration from weeks to hours.

WALK Phase (3–12 months): Policy Automation & Integration

Objective: Automate data protection; integrate across security stack.

| Activity | Owner | Deliverable | Success Metrics |
| --- | --- | --- | --- |
| Policy-Driven Access Control | IAM/PAM Team | ABAC policies live; MFA + risk-based decisions on data access | % of data access decisions made by policy engine (target: 70%+) |
| Data Masking & Tokenization | Security Ops | Masking rules deployed for PII in dev/test; tokenization for payment data | % of sensitive data masked in non-prod (target: 95%+) |
| DLP Agents & Monitoring | SOC | Endpoint DLP, email/web gateway integration, file-share monitoring | # of policy violations detected/blocked per week; false positive rate <5% |
| CASB Deployment | Cloud Architect | Cloud app discovery; sanctioned/unsanctioned app classification | # of cloud apps discovered, risk assessment complete |
| Incident Response Automation | SOC | Playbook for data exfil: auto-block, notify, escalate | Time-to-respond for high-risk incidents (target: <15 min) |
| SIEM Integration | SOC | Cross-system alert correlation; dashboard showing data access patterns | Mean time to detect anomalies (target: <5 min) |

Milestones:

- Month 3: ABAC policies for 50%+ of critical data; MFA enforced

- Month 6: Masking rules live for PII; DLP blocking demo

- Month 9: SIEM dashboard operational; incident playbooks tested

- Month 12: 90%+ of data access governed by policy

ROI Indicator: Reduce breach recovery time by 50%; demonstrate compliance in audits without manual evidence gathering.

RUN Phase (12+ months): Autonomous, Adaptive Security

Objective: Continuous, AI-driven risk management with minimal manual intervention.

| Activity | Owner | Deliverable | Success Metrics |
| --- | --- | --- | --- |
| Anomaly Detection at Scale | SOC + Data Science | UEBA deployed; behavioral baselines updated weekly | False-positive alert rate (target: <2%); detection accuracy >90% |
| Real-time Data Lineage | Data Architects | Impact analysis: “If I revoke access to User X, which reports break?” | Query response time <2 sec; lineage accuracy >95% |
| Autonomous Response | SOC | Auto-revoke, auto-re-auth, auto-escalate for high-risk events | % of incidents auto-remediated (target: 40%+) |
| Continuous Compliance | Compliance | Automated compliance dashboards (GDPR, HIPAA, SOC 2); audit trail integrity proven | # of manual audit tasks eliminated (target: 60%+); audit completion time <2 weeks |
| AI/ML Data Access | Cloud/Data Architects | ML pipelines access only de-identified, tokenized, scoped data; audit trail complete | % of ML training data governed (target: 100%); breach risk: zero incidents |
| Board Metrics | CISO + CFO | Quarterly KPI dashboard: breach impact, detection time, compliance posture, cost savings | Articulate ROI to business (e.g., “Prevented $X breach via early detection”) |

Milestones:

- Month 13: UEBA baseline stable; 80%+ of alerts auto-triaged

- Month 18: Data lineage queries live; >50% of incidents auto-remediated

- Month 24: Continuous compliance reporting reduces audit effort by 70%

ROI Indicator: Move from “response to breaches” to “prediction and prevention.”

The “First 90 Days” Checklist

WEEK 1-2: Establish Governance & Kickoff

- ☐ Kick off executive steering committee (monthly cadence)

- ☐ Assign RACI roles; confirm budget & headcount

- ☐ Communicate strategy & roadmap to board

- ☐ Publish “Data Classification 101” training module

- ☐ Select DSPM/discovery tool vendor (RFP → LOI)

WEEK 3-4: Launch Discovery & Assessment

- ☐ Deploy data discovery tool; configure scanners

- ☐ Conduct data security risk assessment (confirm priority datasets)

- ☐ Map existing encryption, DLP, CASB capabilities

- ☐ Audit compliance gaps (GDPR, HIPAA, etc.)

- ☐ Draft data classification taxonomy; socialize with stakeholders

WEEK 5-8: Quick Wins & Foundation

- ☐ Classify top 20 datasets; apply labels

- ☐ Enable encryption for top 10 at-risk datasets

- ☐ Activate audit logging to SIEM (test connectivity)

- ☐ Conduct “data owner” interviews; assign stewards

- ☐ Develop & approve formal policies (Exec sign-off)

WEEK 9-12: Integration & Pilots

- ☐ Pilot IAM integration: 50 high-value users, policy-driven access

- ☐ Deploy DLP agent to 20% of workforce; monitor false positives

- ☐ Stand up SOC dashboard; daily data-security metrics review

- ☐ Run tabletop incident response exercise

- ☐ Plan Phase 2 (ABAC rollout, masking, UEBA)

Success Criteria (End of Week 12):

- ✓ 80%+ of sensitive data discovered & classified

- ✓ Encryption enabled for top 10 datasets

- ✓ Audit trail flowing to SIEM without gaps

- ✓ Executive awareness & board confidence in roadmap

- ✓ Policies approved; employees understand data handling expectations

THE PRACTITIONER’S TOOLKIT: The “Use Now” Artifacts

1. Sample Data Classification Policy Statement

POLICY: DATA CLASSIFICATION AND HANDLING STANDARD

Purpose: Establish consistent data handling practices based on sensitivity.

CLASSIFICATION LEVELS:

[PUBLIC] – Information that can be freely shared externally

- Approved marketing materials, published documents

- No encryption required

- No access control needed

- Shareable via email, unencrypted file transfer

- Examples: Press releases, blog posts, public documentation

[INTERNAL] – Information for internal use only

- Organizational policies, employee handbooks, internal communications

- Encryption at-rest recommended

- Basic access control (employee-only)

- Shareable via secure channels (VPN, corporate email)

- Examples: Internal wikis, org charts, non-sensitive memos

[CONFIDENTIAL] – Sensitive data requiring protection

- Customer PII (email, phone, address); financial records; contract details

- Encryption REQUIRED (both at-rest and in-transit)

- ABAC: Need-to-know access; MFA required

- Authorized channels: Secure file transfer (SFTP), encrypted email, cloud sync with DLP

- Log & audit: All access logged; violations require investigation

- Examples: Customer database, payroll, source code, legal documents

[RESTRICTED] – Highly sensitive; minimal access

- Executive financial data, M&A details, personal health info, regulatory secrets

- Encryption REQUIRED + tokenization for subset

- ABAC: Executive approval + MFA + context-aware (location, device)

- Authorized channels: Air-gapped systems or high-assurance channels only

- Log & audit: Real-time alerting; access reviewed daily

- Examples: Board financials, acquisition targets, sensitive health records

RESPONSIBILITIES:

- Data Owner: Assign classification; define access criteria

- Data Steward: Enforce handling per classification

- User: Follow procedures; report mishandling

- Audit: Verify compliance quarterly

VIOLATIONS:

- Unencrypted RESTRICTED/CONFIDENTIAL data in email: Block + investigate

- Unauthorized access: Revoke access + incident report

- Policy override without approval: Escalate to CISO
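The handling rules above lend themselves to a policy-as-code lookup that enforcement points (for example, an email gateway) can query. The sketch below is one possible encoding, with hypothetical type and function names; where the policy only recommends a control (INTERNAL encryption at rest) or is silent (INTERNAL logging), the flag is set to `False` as an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Handling:
    encrypt_at_rest: bool
    encrypt_in_transit: bool
    mfa_required: bool
    log_all_access: bool


# Required (not merely recommended) controls per classification level.
HANDLING = {
    "PUBLIC": Handling(False, False, False, False),
    "INTERNAL": Handling(False, False, False, False),  # at-rest encryption is only recommended
    "CONFIDENTIAL": Handling(True, True, True, True),
    "RESTRICTED": Handling(True, True, True, True),
}


def email_decision(classification: str, encrypted: bool) -> str:
    """Apply the first VIOLATIONS rule: unencrypted sensitive data in email is blocked."""
    rules = HANDLING[classification.upper()]
    if rules.encrypt_in_transit and not encrypted:
        return "BLOCK_AND_INVESTIGATE"
    return "ALLOW"
```

Keeping the rules in one table means a change to the policy statement translates into a one-line change that every enforcement point picks up.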

2. Threat Assessment & Data Risk Scoring Template

Purpose: Prioritize data protection based on criticality and risk.

| Data Asset | Classification | Volume (GB) | # Users | Threat Level | Compliance Impact | Risk Score | Priority |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Customer PII Database | CONFIDENTIAL | 500 | 150 | HIGH | GDPR/CCPA | 9/10 | 🔴 P1 |
| Source Code Repository | CONFIDENTIAL | 50 | 80 | HIGH | IP protection | 8/10 | 🔴 P1 |
| Sales Forecasts | INTERNAL | 10 | 30 | MEDIUM | Competitive | 5/10 | 🟡 P2 |
| Marketing Assets | PUBLIC | 200 | 1000 | LOW | None | 2/10 | 🟢 P3 |
| Executive Financials | RESTRICTED | 1 | 5 | CRITICAL | SOX/audit | 10/10 | 🔴 P1 |
| Employee Health Records | RESTRICTED | 5 | 5 (HR) | HIGH | HIPAA | 9/10 | 🔴 P1 |
| Vendor Contracts | CONFIDENTIAL | 20 | 50 | MEDIUM | Legal/IP | 6/10 | 🟡 P2 |
| Product Roadmap | CONFIDENTIAL | 5 | 20 | HIGH | Competitive | 8/10 | 🔴 P1 |

Risk Scoring Calculation:

- Risk = [(Threat Level × 3) + (Compliance Impact × 3) + (User Exposure × 2) + (Data Sensitivity × 2)] ÷ 10, with each factor scored from 1 to 10 so the weighted result stays on a 10-point scale

- Scores 8-10: P1 (Immediate action — encrypt, audit, monitor)

- Scores 5-7: P2 (Urgent — deploy controls within 3 months)

- Scores <5: P3 (Plan controls, not emergency)
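The scoring rule can be expressed as a short function. This sketch reads the formula as a weighted sum divided by the total weight (10) and assumes each factor is scored from 1 to 10; the factor names and the handling of fractional scores between bands (for example 7.5 falling into P2) are assumptions.

```python
# Weights from the scoring formula; they sum to 10.
WEIGHTS = {"threat": 3, "compliance": 3, "exposure": 2, "sensitivity": 2}


def risk_score(threat: float, compliance: float, exposure: float, sensitivity: float) -> float:
    """Weighted average of four factors, each assumed to be scored 1-10."""
    factors = {
        "threat": threat,
        "compliance": compliance,
        "exposure": exposure,
        "sensitivity": sensitivity,
    }
    for name, value in factors.items():
        if not 1 <= value <= 10:
            raise ValueError(f"{name} must be between 1 and 10")
    weighted = sum(WEIGHTS[name] * value for name, value in factors.items())
    return weighted / sum(WEIGHTS.values())


def priority(score: float) -> str:
    """Map a score to the P1/P2/P3 bands; fractional scores round down a band."""
    if score >= 8:
        return "P1"
    if score >= 5:
        return "P2"
    return "P3"


if __name__ == "__main__":
    score = risk_score(threat=9, compliance=9, exposure=8, sensitivity=9)
    print(score, priority(score))  # 8.8 P1
```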

3. RFP/RFI Requirements: Vendor Capability Evaluation

When evaluating DSPM, DLP, encryption, or policy-enforcement tools, ask:

Functional Requirements:

- ☐ Does it auto-discover unstructured data (files, logs) AND structured data (databases)?

- ☐ Can it classify by pattern AND by policy (e.g., “PII = SSN format OR labeled as such”)?

- ☐ Does it support our top N data sources (SAP, Salesforce, SharePoint, S3, Snowflake)?

- ☐ Can it enforce policies in real-time (block/redact before user sees data)?

- ☐ Does it log who accessed what, when, how, and why?

- ☐ Can it integrate with our IAM (Okta, Entra ID) for attribute-based policy decisions?

- ☐ Does it support our encryption key management (KMIP, BYOK, or native KMS)?
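As a concrete reading of the “pattern AND policy” requirement, the sketch below flags content as PII if it either matches a US SSN format or already carries a `PII` label. It is illustrative only: the label name is an assumption, and a real classifier would add validation and many more patterns to keep false positives down.

```python
import re
from typing import Iterable

# Matches the common NNN-NN-NNNN layout only; it does not validate the number.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def is_pii(text: str, labels: Iterable[str] = ()) -> bool:
    """PII = SSN format found in the content OR the asset is labeled as PII."""
    return "PII" in set(labels) or SSN_PATTERN.search(text) is not None
```

When evaluating vendors, it is worth asking to see the equivalent rule definition in their tool, along with how it is tested against sample data.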

Integration & Automation:

- ☐ Does it have API access for custom integrations?

- ☐ Can it feed alerts to our SIEM (Splunk, Sentinel, ArcSight)?

- ☐ Does it trigger playbooks in our SOC tooling (ServiceNow, Demisto)?

- ☐ Can it auto-remediate (revoke, mask, quarantine)?

- ☐ Does it support orchestration with IAM/PAM (SailPoint, CyberArk)?

Scalability & Performance:

- ☐ How many endpoints/APIs can it monitor concurrently?

- ☐ What’s the latency for policy evaluation (target: <100ms for access decisions)?

- ☐ How much historical data can it retain (target: 1+ years audit logs)?

- ☐ Can it scale to multi-cloud (AWS, Azure, GCP, on-prem)?

Compliance & Auditability:

- ☐ Can it demonstrate compliance with GDPR, HIPAA, SOC 2?

- ☐ Does it provide reports for auditors (no manual work)?

- ☐ Can it prove data lineage (where did this PII come from?)?

- ☐ Does it support data retention schedules and automated disposal?

Cost & Support:

- ☐ What’s the TCO over 3 years (licensing + deployment + staffing)?

- ☐ Is there a consumption-based model (pay per GB scanned) or fixed?

- ☐ What’s the SLA for support and incident response?

- ☐ Do they offer training, implementation, and managed services?

4. Access Risk Classification: Who Gets What Data?

Use this matrix to codify access patterns; feed into policy engine:

| Role / Function | Normal Data Access | High-Risk Indicators | Control Type | Approval |
| --- | --- | --- | --- | --- |
| Sales Rep | Customer names, contact, opportunity status | Accessing salary data, source code, competitor info | Auto-approve with masking (hide cost) | None |
| Data Analyst | De-identified customer behavior, aggregated metrics | Raw PII, payment records, executive data | Require explicit approval + context | Manager |
| Finance Manager | Payroll, budgets, expenses for their cost center | Accessing other departments’ payroll, exec compensation | Auto-approve within scope; block cross-department | None |
| New Contractor | Assigned project scope only; limited to first 30 days | Any data outside project scope, after 30 days | Require manager approval + time-bounded token | Manager + CISO |
| Executive (C-Suite) | Full financials, strategic data, board materials | All access granted with audit trail, no masking | Auto-approve + real-time alerting | None (logged) |
| 3rd-Party Vendor | API access to specific data subset for integration | Interactive access, export, access outside contract | Require API key + scoped entitlements, no GUI | Procurement + CISO |
| SOC Analyst | Audit logs, access patterns, security events | Customer PII, proprietary data outside investigation scope | Auto-approve investigation scope; block others | Incident lead |

Implementation:

- Translate into ABAC policies: (Role=DataAnalyst AND DataType=De-Identified AND Department=Own) → ALLOW with masking

- For high-risk indicators, trigger human review or block outright.
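A minimal sketch of how the Data Analyst row might translate into an ABAC decision function. The attribute names, the data-type strings, and the default-deny fallback are assumptions made for illustration; a production policy engine would express the same logic in its own policy language.

```python
from dataclasses import dataclass

# Data types listed as high-risk indicators for the Data Analyst role.
ANALYST_HIGH_RISK = {"RawPII", "PaymentRecords", "ExecutiveData"}


@dataclass(frozen=True)
class AccessRequest:
    role: str
    data_type: str
    user_department: str
    data_department: str


def evaluate(req: AccessRequest) -> str:
    """Return a decision string for one request; anything unmatched is denied."""
    if req.role == "DataAnalyst":
        own_department = req.user_department == req.data_department
        if req.data_type == "De-Identified" and own_department:
            return "ALLOW_WITH_MASKING"
        if req.data_type in ANALYST_HIGH_RISK:
            return "REQUIRE_MANAGER_APPROVAL"
    return "DENY"


if __name__ == "__main__":
    print(evaluate(AccessRequest("DataAnalyst", "De-Identified", "Marketing", "Marketing")))
    print(evaluate(AccessRequest("DataAnalyst", "RawPII", "Marketing", "Marketing")))
```

Each additional row of the matrix becomes another branch or, more maintainably, another entry in a rule table evaluated in order.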

5. KPI Dashboard Mockup (Quarterly Board View)

Metrics Definition:

| Metric | Calculation | Target | Owner | Cadence |
| --- | --- | --- | --- | --- |
| % Data Classified | (Classified / Total) × 100 | 90%+ | Data Architect | Monthly |
| Policy Compliance Rate | (Compliant accesses / Total) × 100 | 95%+ | CISO | Daily |
| Mean Time to Detect (MTTD) | Avg. time from incident start to detection | <5 min | SOC | Daily |
| Mean Time to Respond (MTTR) | Avg. time from detection to containment | <15 min | SOC | Daily |
| Breach Impact (if any) | $ cost of breach / data exposed | Minimize | CISO | Per incident |
| ROI on Program | (Cost avoided – Investment) / Investment | >3.0x | CFO | Quarterly |
| Training Completion Rate | (Trained users / Total employees) × 100 | 80%+ | HR/Security | Quarterly |
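The calculations in the table are simple enough to compute directly from incident and spend records. The helper names below are hypothetical, and the sketch assumes incident timestamps are available as `datetime` pairs.

```python
from datetime import datetime
from statistics import mean


def percentage(part: float, total: float) -> float:
    """Generic (part / total) x 100, used for classified %, compliance rate, training."""
    if total == 0:
        raise ValueError("total must be non-zero")
    return 100 * part / total


def mean_minutes(intervals: list) -> float:
    """Average duration in minutes over (start, end) datetime pairs (MTTD or MTTR)."""
    return mean([(end - start).total_seconds() / 60 for start, end in intervals])


def program_roi(cost_avoided: float, investment: float) -> float:
    """(Cost avoided - Investment) / Investment, expressed as a multiple."""
    if investment == 0:
        raise ValueError("investment must be non-zero")
    return (cost_avoided - investment) / investment


if __name__ == "__main__":
    # Figures from the sample quarterly review in Appendix C.
    print(round(program_roi(2_100_000, 650_000), 2))  # 2.23
```

With the sample figures from Appendix C ($2.1M avoided on $650K spend), the formula yields roughly 2.2x, which would fall short of the >3.0x target in this table.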

APPENDIX: Execution Toolkit

A. Sample Incident Response Playbook for Data Exfiltration

PLAYBOOK: High-Risk Data Exfiltration Attempt

TRIGGER:

- DLP detects >100 files marked CONFIDENTIAL being transferred to personal email or cloud (Dropbox, iCloud)

- UEBA flags access from unauthorized location with bulk download

- SOC receives alert: “User accessing 500+ customer records in <5 min”
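The third trigger is a sliding-window threshold. A minimal sketch for a single user follows, assuming access events arrive in timestamp order with a record count attached; the class name and defaults are illustrative, with the defaults mirroring the 500-record, 5-minute rule.

```python
from collections import deque
from datetime import datetime, timedelta


class BulkAccessDetector:
    """Flags when one user reads max_records or more within a sliding window."""

    def __init__(self, max_records: int = 500, window: timedelta = timedelta(minutes=5)):
        self.max_records = max_records
        self.window = window
        self._events = deque()  # (timestamp, record_count), oldest first
        self._total = 0

    def observe(self, ts: datetime, records: int) -> bool:
        """Record one access event; return True if the threshold is reached."""
        self._events.append((ts, records))
        self._total += records
        # Drop events that are a full window (or more) older than the newest one.
        while self._events and ts - self._events[0][0] >= self.window:
            _, old_count = self._events.popleft()
            self._total -= old_count
        return self._total >= self.max_records
```

In a SIEM or UEBA tool this would typically be a correlation rule keyed by user ID; the sketch only shows the windowing logic behind such a rule.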

IMMEDIATE ACTIONS (Auto + Manual, <5 min):

- SOC tool auto-blocks the transfer (DLP, CASB)

- Incident created in ServiceNow with context (user, data, time, method)

- Alert sent to on-call SOC lead + manager of user

- Session logged; IP geolocation captured

- Real-time timeline built: “User accessed X, then Y, then Z”

INVESTIGATION (5–30 min):

- SOC calls user: “We detected unusual access. Can you explain?”

- If legitimate: Log override, whitelist for future, close

- If not reached: Escalate to CISO; prepare for revoke

- Review user’s role, approvals, access history (past 30 days)

- New access that wasn’t approved? Revoke it.

- Accessing data outside their department? Investigate intent.

- Check if credentials compromised (failed login attempts, VPN logins from multiple IPs simultaneously)

- Review data accessed: Is it a known-good subset, or something unusual?

CONTAINMENT (30–60 min):

IF CONFIRMED EXFILTRATION ATTEMPT:

- Revoke all active sessions for user

- Reset passwords (force new one at next login)

- Revoke VPN + API tokens

- Quarantine user’s device (prevent further access via endpoint DLP)

- Alert Legal & HR (if termination or investigation needed)

- Review endpoint, DLP, and cloud logs: Was data copied to USB or cloud before detection?

IF COMPROMISED CREDENTIAL:

- Check logs for data accessed during compromise window

- Notify affected customers (if PII exposed)

- Initiate password reset for entire team (if service account)

POST-INCIDENT (24–48 hrs):

- Determine root cause: Social engineering? Phishing? Insider threat?

- Update policies: Close detection gaps

- Communicate findings to leadership + board (if material)

- Review user access: Revoke unnecessary permissions (“least privilege”)

- Lessons learned: Update playbook; train team

REPORTING:

- Document in centralized incident log (immutable, audit trail)

- Report metrics: Time to detect, time to contain, data exposed, cost avoided

- Share de-identified summary in SOC team meeting (learning opportunity)

B. Policy Template: Data Access Request & Approval Process

PROCESS: Requesting Access to Sensitive Data

USER INITIATES REQUEST (via IGA portal):

- Select data asset (from catalog)

- State reason: “I need customer list for Q4 campaign”

- Specify duration: “30 days (Nov 1 – Nov 30, 2026)”

- System shows: Classification, policy requirements, approval chain

SYSTEM EVALUATION (automated):

- User’s role + data sensitivity → Approval path determined

- If low-risk (e.g., sales rep accessing customer names): Auto-approve

- If medium-risk (e.g., vendor accessing cost data): Manager approval

- If high-risk (e.g., contractor accessing PII): Manager + CISO + Legal

- If very high-risk (e.g., finance staff accessing executive payroll): Require MFA + C-Level approval
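The risk-to-approval mapping above can be captured as a lookup table. This is a sketch under the assumption that an upstream step has already assigned one of four risk tiers; the tier keys and type names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalPath:
    approvers: tuple
    mfa_required: bool = False


# One entry per risk tier described in the system evaluation step.
APPROVAL_PATHS = {
    "low": ApprovalPath(()),
    "medium": ApprovalPath(("Manager",)),
    "high": ApprovalPath(("Manager", "CISO", "Legal")),
    "very_high": ApprovalPath(("C-Level",), mfa_required=True),
}


def approval_path(risk_tier: str) -> ApprovalPath:
    """Look up the approval chain for a tier; unknown tiers are rejected."""
    try:
        return APPROVAL_PATHS[risk_tier]
    except KeyError:
        raise ValueError(f"unknown risk tier: {risk_tier!r}") from None


def is_auto_approved(risk_tier: str) -> bool:
    """A request is auto-approved when nobody has to sign off and MFA is not forced."""
    path = approval_path(risk_tier)
    return not path.approvers and not path.mfa_required
```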

APPROVAL CHAIN:

Manager Review:

- Confirm user’s role justifies access

- Duration reasonable?

- Approve/Deny (comment if denying)

CISO Review (if flagged):

- User’s risk score normal?

- Time-based or context-based risk (e.g., 3 AM access)?

- Approve/Deny

Compliance Review (if regulated data):

- Data handling rules followed?

- Encryption/audit logging in place?

- Approve/Deny

POST-APPROVAL:

- Access granted for specified duration

- Masking applied (if necessary): e.g., hide cost fields from sales

- Token issued (time-bound, single-use if possible)

- Access logged: User, data, duration, actions taken

- Reminder: “Access expires Nov 30; renewal request by Nov 25”

AT EXPIRATION:

- Access auto-revoked

- Audit report generated: “User X accessed Y records, exported 3 files”

- Data deletable or retention enforced (per policy)
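Time-bound access with automatic expiry can be modeled as a grant object that is checked on every request. The sketch below uses the 30-day example window and a five-day renewal lead taken from the reminder text; the class and method names are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AccessGrant:
    user: str
    asset: str
    granted_at: datetime
    duration: timedelta

    @property
    def expires_at(self) -> datetime:
        return self.granted_at + self.duration

    def is_active(self, now: datetime) -> bool:
        """Access is valid from the grant time up to, but not including, expiry."""
        return self.granted_at <= now < self.expires_at

    def renewal_reminder_at(self, lead: timedelta = timedelta(days=5)) -> datetime:
        """When to remind the user that a renewal request is due."""
        return self.expires_at - lead


if __name__ == "__main__":
    grant = AccessGrant("user_x", "customer_list", datetime(2026, 11, 1), timedelta(days=30))
    print(grant.expires_at)                          # 2026-12-01 00:00:00
    print(grant.is_active(datetime(2026, 12, 1)))    # False
```

Checking `is_active` at request time means revocation needs no separate cleanup job to be effective, although one is still useful for removing stale entitlements and producing the expiry audit report.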

EXCEPTIONS:

Emergency Access: CISO can grant immediate access (4-hr max) with post-hoc justification. Logged, audited, reported to board.

C. Quarterly Review & Roadmap Update Template

QUARTERLY DATA-CENTRIC SECURITY REVIEW: QX 2026

EXEC SUMMARY:

- Maturity: Crawl → Walk transition (Month 9 of 24-month roadmap)

- Incidents prevented: 12 (est. cost avoided: $2.1M)

- Compliance: 100% ready for GDPR audit (in Jan 2027)

- Spending YTD: $650K (on budget)

SCORECARD: Crawl → Walk Milestones

Foundational Visibility (Crawl Phase) — ✓ COMPLETE

- Data discovery (80%+): ✓ 1.2 PB classified

- Encryption baseline: ✓ 100% of critical data

- Audit logging: ✓ 6+ months retained

- Policies drafted: ✓ Approved by leadership

Policy Automation & Integration (Walk Phase) — ⏳ IN PROGRESS (75%)

- ABAC policies live: ✓ 70% of data

- Data masking: ✓ 95% of PII in non-prod

- DLP & blocking: ✓ 18 exfil attempts stopped

- SIEM integration: ✓ Real-time alerts active

- Incident automation: ⏳ 30% of playbooks live

- CASB deployment: ⏳ 2 of 5 cloud apps covered

KEY ACHIEVEMENTS THIS QUARTER:

- SOC detected & blocked 12 exfiltration attempts (vs. 0 last quarter)

- Reduced time-to-detect anomalies from 18.5 min → 4.2 min (77% faster)

- AI team now safely accesses 500GB de-identified customer data (was blocked)

- Compliance team reports 100% audit-log coverage (vs. 60% last quarter)

- Executive team gained confidence: Data-centric controls actually work

ISSUES & MITIGATION:

🔴 Issue: Incident response automation slower than expected

Root cause: SIEM-to-IAM integration had API latency

Resolution: Optimized API calls, reduced latency 500ms → 50ms

Status: Resolved; playbooks re-tested

🟡 Issue: High false-positive rate in DLP (5% → now <2%)

Root cause: Overly strict rules blocked legitimate business activity

Resolution: Refined rules; added risk-context from IAM

Status: In progress; targeting <1% by Q1

UPCOMING RISKS & MITIGATION PLAN:

- Winter holidays: Reduced SOC staffing → Hire temp analyst + auto-response

- Cloud migration (Feb 2027): Ensure data-centric controls follow data to cloud

- GDPR audit (Jan 2027): Rehearse audit; ensure evidence automated

BUDGET & SPEND:

YTD: $650K (on budget)

- Tools & licensing: $380K

- Staff (architect, engineers, analysts): $220K

- Training & consulting: $50K

Q1 2027: $200K (UEBA deployment, automation)

Cost avoidance (prevented breaches): ~$2.1M

NEXT QUARTER PRIORITIES (Q1 2027):

- ✓ UEBA production-ready (baseline 100% of users)

- ✓ Auto-response: 50% of incidents auto-remediated

- ✓ Data lineage: Impact analysis query <2 sec

- ✓ Continuous compliance: Reduce audit work by 40%

ORGANIZATIONAL CHANGES:

Proposed: Hire 1 additional SOC analyst + 1 data governance specialist (needed for Walk → Run phase scaling)

Timeline: Hiring starts Q4; onboarding Q1 2027

STAKEHOLDER FEEDBACK:

✓ CEO: “Great progress. When do I see fraud reduction tied to this?”

→ Response: Added KPI to dashboard; target 10% reduction in Q1 2027

✓ CFO: “ROI is clear. What’s the path to lower the $200K/quarter run cost?”

→ Response: Automation in Run phase will reduce manual reviews by 40%

✓ CTO: “Data teams want fast access to data for ML. Are we blocking them?”

→ Response: DCS enables *safe* access; 500GB now available (was zero)

APPROVAL & SIGN-OFF:

CISO: ________________________ Date: ________

Chief Architect: ________________ Date: ________

CFO: ___________________________ Date: ________

Conclusion

This playbook translates data-centric security from strategy to execution. It is designed for CISOs and chief architects who need to:

- Align the organization (RACI, operating model, governance)

- Design the system (reference architecture, control patterns, integration)

- Execute the roadmap (phased maturity, quick wins, metrics)

- Defend the business (incident response, compliance, continuous improvement)

Start with the first 90 days. Get visibility, classify data, enable audit logging, and deploy a playbook. Build trust and momentum. Then scale to policy automation (Walk phase), and finally AI-driven resilience (Run phase).

The data is your perimeter now. Defend it.

For more information on TechVision’s data-centric security research and consulting services, contact TechVision.